Earlier this year, AMD launched its Zen 3 based desktop processor solutions with integrated graphics. Marketed as the Ryzen 5000G family, these processors are the latest offering to combine AMD’s high-performing Zen 3 cores with tried-and-tested Vega 8 graphics, all built on TSMC’s 7nm process. As desktop processors, AMD made them available to system builders for a few months, allowing the company to keep track of stock levels and have a consistent demand during a high-demand phase for silicon. But on August 5th, they will be made available to buy at retail, and we’ve got the most important models to test.

AMD actually came to market with its Zen 3-based integrated graphics silicon back in December 2020. The Cezanne silicon, with eight Zen 3 cores and up to Vega 8 graphics, was first earmarked for laptop use. We saw it come to market in that fashion for the 15 W and 45 W segments, and tested it in the ASUS ROG Flow X13 laptop in a 35 W variation, paired with a discrete graphics card.

AMD Ryzen 9 5980HS Cezanne Review: Ryzen 5000 Mobile Tested

As a mobile chip in both low power and high power segments, it had to do duty as both a processor and graphics at 15 W, but mainly as a processor at 45 W powering a discrete graphics card. For the desktop processors, AMD cranks up the power even more to 65 W, where it is expected to perform either as a good CPU powering a GPU, or as an integrated graphics solution and do everything.

The Ryzen 5000G processor series is an upgrade over last year’s Ryzen 4000G processor series. AMD co-designed both of these processor families to re-use key parts of the chip design, enabling a fast time-to-market and quicker upgrade cycle for AMD’s partners (OEMs), like Dell, HP, Lenovo, and others. The biggest re-use between the two families is the graphics, which has Vega 8 graphics on both, and although there’s a hefty frequency bump for 5000G in mobile, the desktop parts actually see a decline. We expect AMD to have its latest RDNA 2 graphics in its G processors next time around, but for now it stays the same because that helps expedite the design for these processors.

AMD CEO, Dr. Lisa Su, with Cezanne silicon


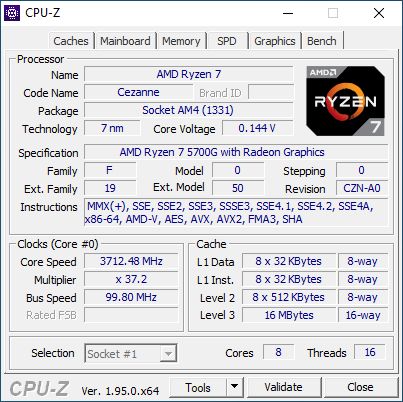

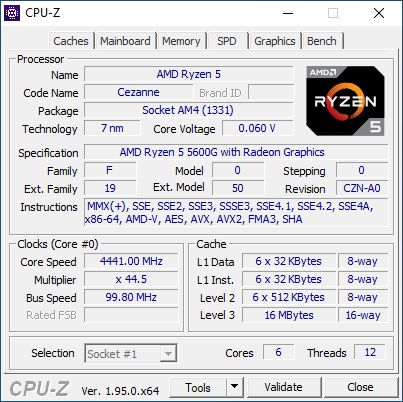

The major difference between the 4000G and 5000G processors is that the new hardware uses eight of AMD’s latest Zen 3 CPU cores, which is an upgrade over the eight Zen 2 cores from last year. The highlight is the +19% raw performance uplift when comparing the two at the same frequency. The second major difference is that the 4000G processors never technically came to retail (but we reviewed them anyway), while AMD is making the Ryzen 7 5700G and Ryzen 5 5600G as individual products that customers can buy.

Under the hood, there are a few more key changes that enthusiasts will be interested in. The 8-core Zen 3 design doubles the L3 cache per core, but also combines it into a single 16 MB L3 cache structure. This enables any of the eight cores to access the full cache, so a core's working set can grow from 4 MB to 16 MB before it has to pay the latency of going out to main memory. The previous design had two clusters of four Zen 2 cores, so while it still had 8 cores, each cluster only had access to 4 MB of L3 cache. This is of sizable importance when it comes to workloads that sit in the 4 MB to 16 MB memory space, such as integrated graphics gaming and discrete graphics gaming.

The new processor is 180 mm² in size, compared to 156 mm² of the last generation, but still fits into the same socket. It contains 10.7 billion transistors, which is up from 9.8 billion. This means an effective decrease in transistor density, although we know that Zen 3 cores are slightly larger than Zen 2 cores, and some additional security measures have been added.
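To put a number on that density change, the arithmetic can be sketched as below. It uses only the die sizes and transistor counts quoted above; the function and variable names are ours, chosen purely for illustration.

```python
def density_mtr_per_mm2(transistors: float, area_mm2: float) -> float:
    """Return transistor density in millions of transistors per mm^2."""
    return transistors / area_mm2 / 1e6


# Figures quoted in the text: 10.7 billion transistors on 180 mm^2 (5000G)
# versus 9.8 billion transistors on 156 mm^2 (4000G).
new_gen = density_mtr_per_mm2(10.7e9, 180)
old_gen = density_mtr_per_mm2(9.8e9, 156)

print(f"5000G: {new_gen:.1f} MTr/mm^2")
print(f"4000G: {old_gen:.1f} MTr/mm^2")
print(f"Change: {(new_gen / old_gen - 1) * 100:+.1f}%")
```

This works out to roughly 59.4 versus 62.8 million transistors per mm², or a little over 5% lower density for the newer chip.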

There are six desktop processors in this family, and two of them are coming to store shelves.

AMD Ryzen 5000G Series APUs

| AnandTech | Core / Thread | Base Freq (MHz) | Turbo Freq (MHz) | GPU CUs | GPU Freq (MHz) | PCIe * | TDP |
|---|---|---|---|---|---|---|---|
| Ryzen 7 5700G | 8 / 16 | 3800 | 4600 | 8 | 2000 | 16+4+4 | 65 W |
| Ryzen 7 5700GE | 8 / 16 | 3200 | 4600 | 8 | 2000 | 16+4+4 | 35 W |
| Ryzen 5 5600G | 6 / 12 | 3900 | 4400 | 7 | 1900 | 16+4+4 | 65 W |
| Ryzen 5 5600GE | 6 / 12 | 3400 | 4400 | 7 | 1900 | 16+4+4 | 35 W |
| Ryzen 3 5300G | 4 / 8 | 4000 | 4200 | 6 | 1700 | 16+4+4 | 65 W |
| Ryzen 3 5300GE | 4 / 8 | 3600 | 4200 | 6 | 1700 | 16+4+4 | 35 W |

*PCIe lanes on the SoC are listed in GFX+Chipset+Storage

The top part is a Ryzen 7 5700G, featuring eight cores and sixteen threads, with a base frequency of 3.8 GHz and a turbo frequency of 4.6 GHz. The Vega 8 graphics runs at 2000 MHz, and we get 16 lanes of PCIe 3.0 for graphics, plus another four for storage. TDP of the chip is rated at 65 W, although in most motherboards the Package Power Tracking will bump power up to 88 W. The Ryzen 7 5700G will have an MSRP of $359.

The second part is a Ryzen 5 5600G, featuring six cores and twelve threads, with a base frequency of 3.9 GHz and a turbo frequency of 4.4 GHz. It drops down in graphics to Vega 7, running at 1900 MHz, but has the same PCIe 3.0 and TDP settings as the Ryzen 7. AMD has indicated that the 5600G should retail for $259.

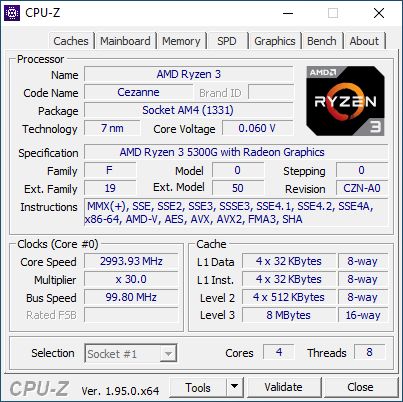

We’re also testing a third part in this review, the Ryzen 3 5300G, which sits near the bottom of the stack. With only four cores and eight threads, up to 4.2 GHz turbo and Vega 6 graphics running at 1700 MHz, this processor contains only half the L3 cache (8 MB total) of the other two. It sounds like it would make a nice $150 processor if it came to retail, and users can pick this processor up on eBay, but it currently sits at $272 plus shipping, making it more expensive than the 5600G. Nonetheless, as we had tested Ryzen 7/5/3 from the 4000G series, we wanted to compare them against the 5000G to see if this is a line AMD might consider taking beyond OEM deployment.

This is ultimately why a staggered launch from laptop to desktop over the course of eight months allows AMD to pitch where its desktop integrated graphics processors should sit in the marketplace. These Cezanne processors use Zen 3 CPU cores, for example, whereas the older ones had Zen 2, Zen+, and Zen before it. What makes these ones different this time around is that AMD is cutting the Ryzen 3 from retail, but the Ryzen 7 at the high-end is now available at retail. The only one that has been consistent is Ryzen 5, and we can compare the Ryzen 5 processors over the years:

Ryzen 5 APUs (65W)

| AnandTech | Architecture | Core / Thread | Base Freq (MHz) | Turbo Freq (MHz) | L3 MB | PCIe | GPU | DDR4 | Price |
|---|---|---|---|---|---|---|---|---|---|
| Ryzen 5 5600G | Zen3 7nm | 6 / 12 | 3900 | 4400 | 16 | 3.0 x24 | Vega7 | 3200 | $259 |
| Ryzen 5 4650G | Zen2 7nm | 6 / 12 | 3700 | 4200 | 4+4 | 3.0 x24 | Vega7 | 3200 | OEM |
| Ryzen 5 3400G | Zen+ 12nm | 4 / 8 | 3700 | 4200 | 4 | 3.0 x8 | Vega11 | 2933 | $149 |
| Ryzen 5 2400G | Zen 14nm | 4 / 8 | 3600 | 3900 | 4 | 3.0 x8 | Vega11 | 2933 | $169 |

AMD has kept the Vega graphics through all four generations, but moved down from the silicon having Vega 11 on 12nm to Vega 8 on 7nm – AMD said that this was because of density increases and finding the right balance, but also the uplift in frequency and power efficiencies the new process node provided.

As it stands, these two new processors fill out AMD's retail offerings, at least down to $259. One of the key benefits is that these two new processors are cheaper than the CPU-only offerings, and both also come with appropriate coolers for running at their default power modes. The difference with a G series processor is that the PCIe lanes are only PCIe 3.0 and the L3 cache is halved, but there’s integrated graphics. Given that modern graphics cards don’t even need PCIe 4.0 levels of bandwidth, we have to see if the cache difference and any frequency differences are worth the price difference.

AMD Ryzen 5000 CPU vs APU Comparisons

| AnandTech | Core / Thread | Base Freq (MHz) | Turbo Freq (MHz) | GPU CUs | GPU Freq (MHz) | PCIe | L3 MB | TDP | SEP |
|---|---|---|---|---|---|---|---|---|---|
| Ryzen 7 5800X | 8 / 16 | 3800 | 4700 | - | - | 4.0 x24 | 32 | 105 W | $449 |
| Ryzen 7 5700G | 8 / 16 | 3800 | 4600 | 8 | 2000 | 3.0 x24 | 16 | 65 W | $359 |
| Ryzen 5 5600X | 6 / 12 | 3700 | 4600 | - | - | 4.0 x24 | 32 | 65 W | $299 |
| Ryzen 5 5600G | 6 / 12 | 3900 | 4400 | 7 | 1900 | 3.0 x24 | 16 | 65 W | $259 |

The key thing with the Ryzen 7 comparison is the TDP difference: while the frequency difference might only be 100 MHz, at 105 W TDP (or 142 W PPT) the 5800X will hold its turbo better.

Chipset Support

AMD has confirmed that X570, B550, and A520 motherboards will support the new 5000G processors. X470 and B450 motherboards might also be supported, but that depends on the motherboard manufacturer. AMD recommends a BIOS with AGESA version 1203b for full performance.

In this article, we will be testing the Ryzen 7 5700G, Ryzen 5 5600G, and Ryzen 3 5300G on our test suite, covering raw CPU performance, integrated graphics performance, but also performance when paired with a discrete GPU.

| AnandTech | Example Processors | Motherboard | DRAM | PSU | SSD |
|---|---|---|---|---|---|
| AMD | | | | | |
| Zen3 APU | Ryzen 7 5700G, Ryzen 5 5600G, Ryzen 3 5300G | GIGABYTE X570 Aorus I Pro (F34) | ADATA 32 GB DDR4-3200 | Corsair AX860i | Crucial MX500 2 TB |
| Zen2 APU | Ryzen 7 4750G, Ryzen 5 4650G, Ryzen 3 4350G | GIGABYTE X570 Aorus I Pro (F30a) | ADATA 64 GB DDR4-3200 | Corsair AX860i | Crucial MX500 2 TB |
| Zen+ APU | Ryzen 5 3400G | GIGABYTE X570 Aorus I Pro (F30a) | ADATA 64 GB DDR4-2933 | Corsair AX860i | Crucial MX500 2 TB |
| Zen APU | Ryzen 5 2400G | GIGABYTE X570 Aorus I Pro (F30a) | ADATA 64 GB DDR4-2933 | Corsair AX860i | Crucial MX500 2 TB |
| Intel | | | | | |
| Rocket Lake | Core i7-11700K | ASUS Maximus XIII Hero (BIOS 0610) | | Corsair AX1600i | Crucial MX500 2 TB |
| Broadwell | Core i7-5775C, Core i5-5675C | GIGABYTE Z97X-UD5H (F10) | Geil Veloce 16 GB DDR3-1600 | Antec HCP 1250W | Crucial MX500 2 TB |
| Tiger Lake | Core i7-1185G7 | Intel Reference | 32 GB LPDDR4X | Integrated | Samsung PCIe 3.0 |

Many thanks to...

We must thank the following companies for kindly providing hardware for our multiple testbeds. Some of this hardware is not in this testbed specifically, but is used in other testing.

Hardware Providers for CPU and Motherboard Reviews

| Sapphire RX 460 Nitro | NVIDIA RTX 2080 Ti | Crucial SSDs | Corsair PSUs |
|---|---|---|---|
| G.Skill DDR4 | ADATA DDR4 | Silverstone Coolers | Noctua Coolers |

A big thanks to ADATA for the AD4U3200716G22-SGN modules for this review. They're currently the backbone of our AMD testing.

Read on for the full review.

The nature of reporting processor power consumption has become, in part, a dystopian nightmare. Historically the peak power consumption of a processor, as purchased, is given by its Thermal Design Power (TDP, or PL1). For many markets, such as embedded processors, that value of TDP still signifies the peak power consumption. For the processors we test at AnandTech, either desktop, notebook, or enterprise, this is not always the case.

Modern high-performance processors implement a feature called Turbo. This allows, usually for a limited time, a processor to go beyond its rated frequency. Exactly how far the processor goes depends on a few factors, such as the Turbo Power Limit (PL2), whether the peak frequency is hardcoded, the thermals, and the power delivery. Turbo can sometimes be very aggressive, allowing power values 2.5x above the rated TDP.

AMD and Intel have different definitions for TDP, but broadly speaking they are applied the same way. The difference comes down to turbo modes, turbo limits, turbo budgets, and how the processors manage that power balance. These topics are 10000-12000 word articles in their own right, and we’ve got a few articles worth reading on the topic.

- Why Intel Processors Draw More Power Than Expected: TDP and Turbo Explained

- Talking TDP, Turbo and Overclocking: An Interview with Intel Fellow Guy Therien

- Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics

- Intel’s TDP Shenanigans Hurts Everyone

In simple terms, processor manufacturers only ever guarantee two values that are tied together - when all cores are running at base frequency, the processor should be running at or below the TDP rating. All turbo modes and power modes above that are not covered by warranty. Intel kind of screwed this up with the Tiger Lake launch in September 2020, by refusing to define a TDP rating for its new processors, instead going for a range. Obfuscation like this is a frustrating endeavor for press and end-users alike.

However, for our tests in this review, we measure the power consumption of the processor in a variety of different scenarios. These include workflows, real-world image-model construction, and others as appropriate. These tests are done as comparative models. We also note the peak power recorded in any of our tests.

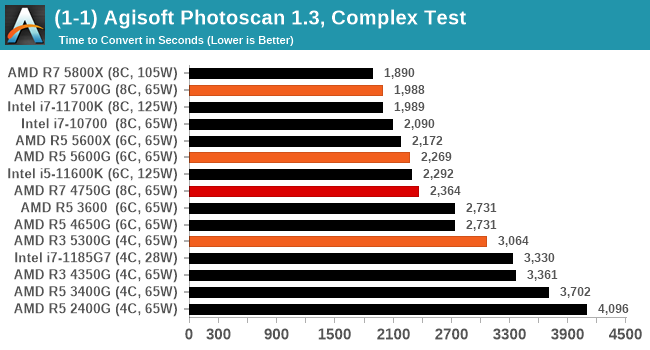

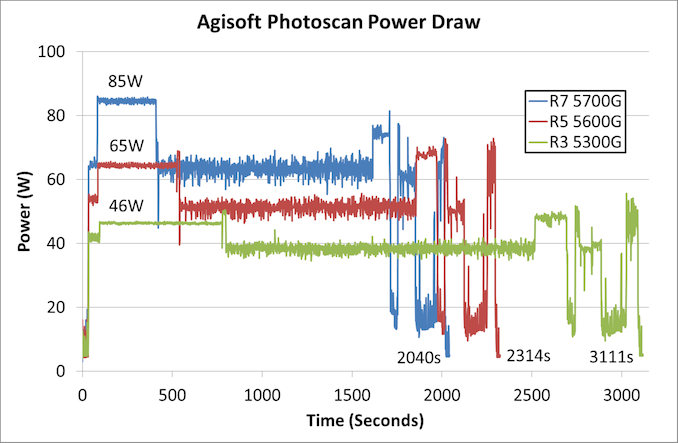

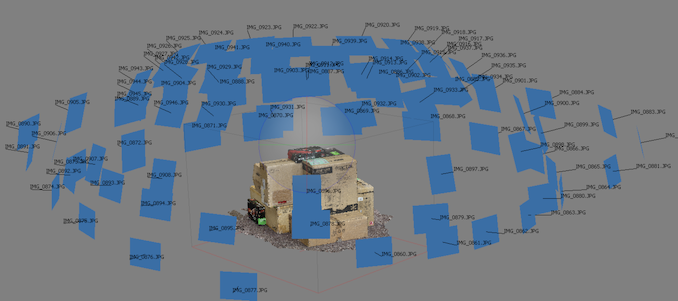

First up is our image-model construction workload, using our Agisoft Photoscan benchmark. This test has a number of different areas that involve single thread, multi-thread, or memory limited algorithms.

For the Ryzen 7 5700G, the most power-hungry part of the test is right at the beginning, where we’re seeing peaks of 85 W. For the 5600G, that first section goes to 65 W, but the peaks actually occur near the end of the test. The 5300G also has peaks later in the test, but that first section is the lowest, running only at 46 W.

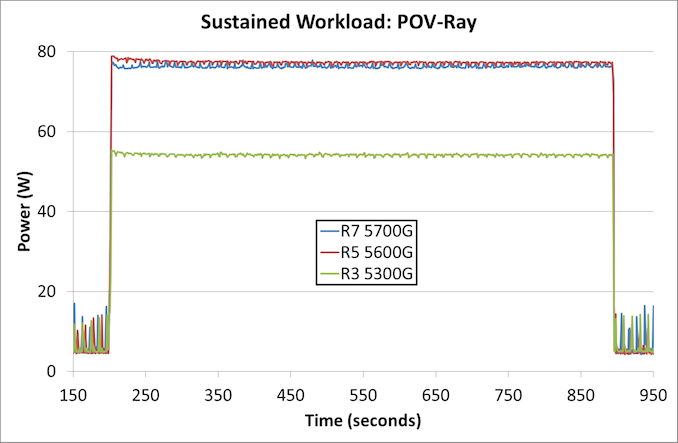

The second test is a sustained rendering workload.

In this instance, the Ryzen 3 5300G is nearer 55 W with a sustained workload over 10 minutes, while the Ryzen 5 and Ryzen 7 sit just below 80 W.

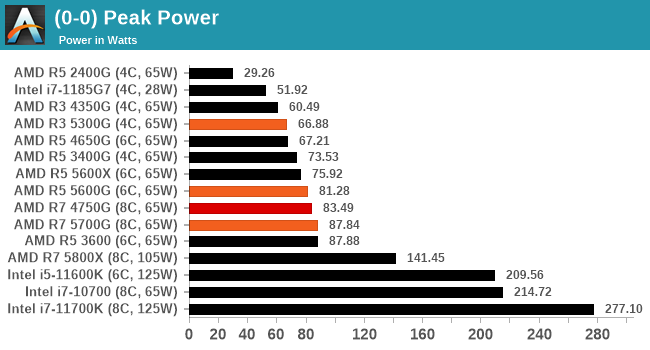

For peak power, we report the highest value observed from any of our benchmark tests.

While all three processors have a TDP rating of 65 W, by default on AMD systems the Package Power Tracking, which is the limiting factor here, is 88 W. The Ryzen 7 is practically at that value, while the Ryzen 5 just goes a smidge over 80 W. The Ryzen 3 on the other hand only matches its TDP in the worst-case scenario.
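The 65 W to 88 W relationship lines up with the commonly cited rule of thumb that AMD's default Package Power Tracking limit on this socket is 1.35x the TDP. That multiplier is not stated in this review, so treat it as an assumption; the sketch below simply shows the arithmetic, and the function name is ours.

```python
# Assumption: default PPT = 1.35 x TDP. The review only states the
# resulting 88 W figure for a 65 W part, not the multiplier itself.
PPT_FACTOR = 1.35


def default_ppt_watts(tdp_watts: float) -> int:
    """Estimate the default package power limit for a given TDP rating."""
    return round(tdp_watts * PPT_FACTOR)


print(default_ppt_watts(65))  # 65 W TDP parts such as the 5700G and 5600G
```

For a 65 W part this gives 88 W, matching the limit described above.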

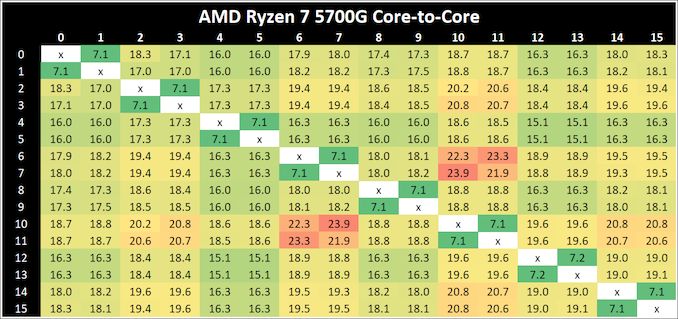

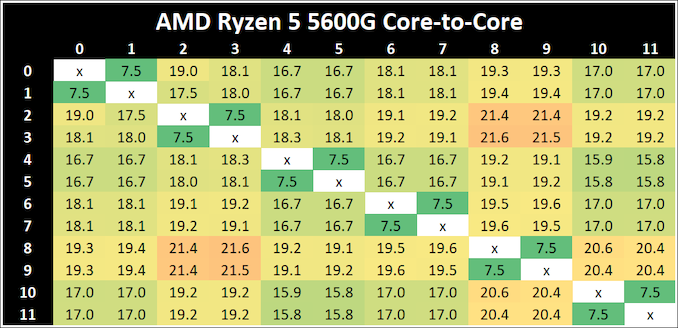

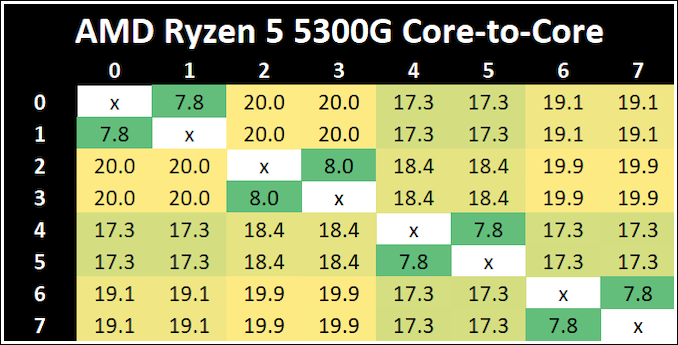

Core-to-Core Latency

As the core count of modern CPUs is growing, we are reaching a time when the time to access each core from a different core is no longer a constant. Even before the advent of heterogeneous SoC designs, processors built on large rings or meshes can have different latencies to access the nearest core compared to the furthest core. This rings true, especially in multi-socket server environments.

But modern CPUs, even desktop and consumer CPUs, can have variable access latency to get to another core. For example, in the first-generation Threadripper CPUs, we had four chips on the package, each with 8 threads, and each with a different core-to-core latency depending on if it was on-die or off-die. This gets more complex with products like Lakefield, which has two different communication buses depending on which core is talking to which.

If you are a regular reader of AnandTech’s CPU reviews, you will recognize our Core-to-Core latency test. It’s a great way to show exactly how groups of cores are laid out on the silicon. This is a custom in-house test built by Andrei, and we know there are competing tests out there, but we feel ours is the most accurate to how quick an access between two cores can happen.

The Ryzen 7 5700G has the quickest thread-to-thread latency, although it also shows the single slowest core-to-core latency. But compared to the 4000G series, having a single unified L3 cache reduces the core-to-core latency a good amount. The Ryzen 3 5300G has the slowest intracore latency, but the fastest average core-to-core.

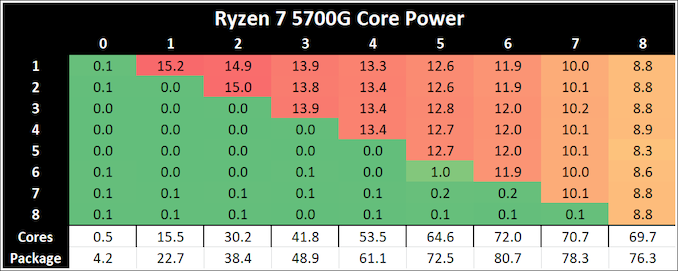

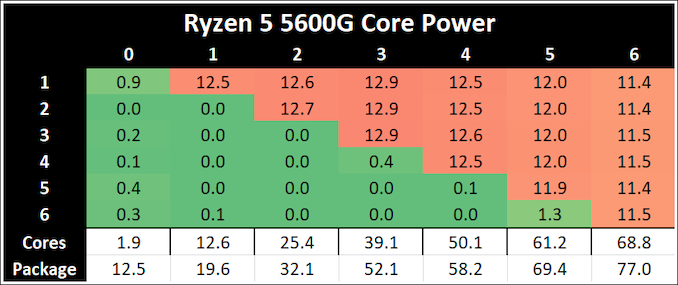

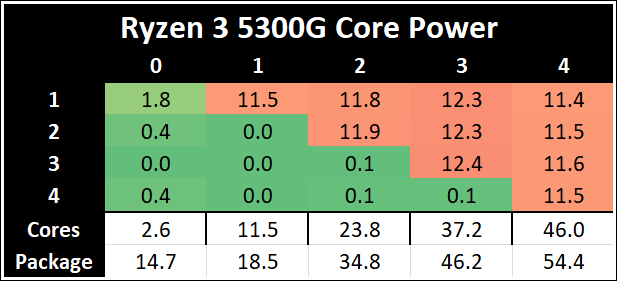

Per-Core Power

One other angle to examine is how much power each core is drawing with respect to the rest of the chip. In this test, we run POV-Ray with a specific thread mask for a minute, and take a power reading 30 seconds into the test. We output the core power values from all cores, and compare them to the reported total package power.

The peak per-core power is shown as 15.2 W when one core is loaded on the Ryzen 7 5700G, and that comes down to ~8.8W when all cores are loaded. Interestingly this processor uses more power when six cores are loaded.

The Ryzen 3 5300G starts at 11.5 W for a single core, but then moves up to 12.3 W when three cores are loaded. It comes back down to 11.5 W when all four cores are loaded, but this ensures a consistent frequency (the 5300G has a 4.0 GHz base and 4.2 GHz turbo, explaining the small variation in loading).

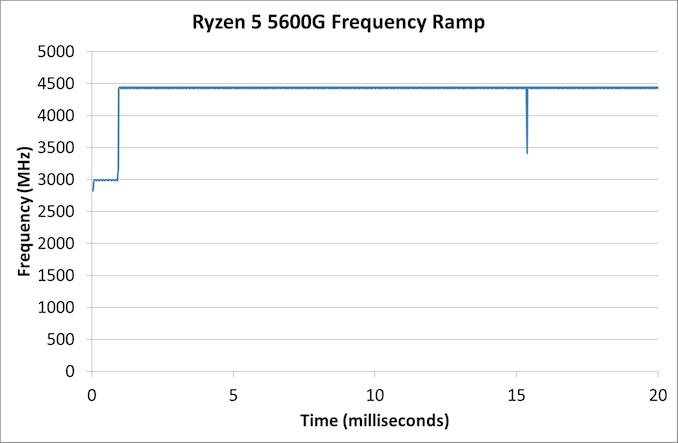

Frequency Ramping

Both AMD and Intel over the past few years have introduced features to their processors that speed up the time from when a CPU moves from idle into a high-powered state. The effect of this means that users can get peak performance quicker, but the biggest knock-on effect for this is with battery life in mobile devices, especially if a system can turbo up quick and turbo down quick, ensuring that it stays in the lowest and most efficient power state for as long as possible.

Intel’s technology is called SpeedShift, although SpeedShift was not enabled until Skylake.

One of the issues though with this technology is that sometimes the adjustments in frequency can be so fast, the software cannot detect them. If the frequency is changing on the order of microseconds, but your software is only probing frequency in milliseconds (or seconds), then quick changes will be missed. Not only that, as an observer probing the frequency, you could be affecting the actual turbo performance. When the CPU is changing frequency, it essentially has to pause all compute while it aligns the frequency rate of the whole core.

We wrote an extensive review analysis piece on this, called ‘Reaching for Turbo: Aligning Perception with AMD’s Frequency Metrics’, due to an issue where users were not observing the peak turbo speeds for AMD’s processors.

We got around the issue by making the frequency probing the workload causing the turbo. The software is able to detect frequency adjustments on a microsecond scale, so we can see how well a system can get to those boost frequencies. Our Frequency Ramp tool has already been in use in a number of reviews.

In our test, the Ryzen 5 5600G jumps from 2700 MHz to the turbo frequency in around a millisecond.
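As a rough illustration of how a ramp time can be read off a frequency trace, the sketch below measures the gap between the last idle sample and the first sample at the target frequency. This is not our Frequency Ramp tool: the function name, the sample format, and the trace values are all hypothetical, made up to resemble the result described above.

```python
from typing import Iterable, Optional, Tuple


def ramp_time_us(
    samples: Iterable[Tuple[float, float]],
    idle_mhz: float,
    target_mhz: float,
) -> Optional[float]:
    """Return microseconds from the last idle sample to the first sample
    at or above target_mhz, or None if the target is never reached.

    Each sample is a (timestamp_us, frequency_mhz) pair in time order.
    """
    start = None
    for t_us, mhz in samples:
        if mhz <= idle_mhz:
            start = t_us  # still idle: keep moving the start marker forward
        elif mhz >= target_mhz and start is not None:
            return t_us - start
    return None


# Synthetic trace for illustration only, not measured data.
trace = [(0, 2700), (200, 2700), (400, 3300), (800, 3900), (1200, 4400)]
print(ramp_time_us(trace, idle_mhz=2700, target_mhz=4400))  # 1000
```

The resolution of the answer is limited by the sampling interval, which is why probing on a microsecond scale matters for this kind of measurement.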

Our previous set of ‘office’ benchmarks have often been a mix of science and synthetics, so this time we wanted to keep our office section purely on real-world performance.

Agisoft Photoscan 1.3.3: link

The concept of Photoscan is about translating many 2D images into a 3D model - so the more detailed the images, and the more you have, the better the final 3D model in both spatial accuracy and texturing accuracy. The algorithm has four stages, with some parts of the stages being single-threaded and others multi-threaded, along with some cache/memory dependency in there as well. For some of the more variable threaded workload, features such as Speed Shift and XFR will be able to take advantage of CPU stalls or downtime, giving sizeable speedups on newer microarchitectures.

For the update to version 1.3.3, the Agisoft software now supports command line operation. Agisoft provided us with a set of new images for this version of the test, and a python script to run it. We’ve modified the script slightly by changing some quality settings for the sake of the benchmark suite length, as well as adjusting how the final timing data is recorded. The python script dumps the results file in the format of our choosing. For our test we obtain the time for each stage of the benchmark, as well as the overall time.
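Recording per-stage and overall times can be done with a small timing helper along these lines. This is a generic sketch and not the script Agisoft supplied: it does not call any Photoscan API, the stage names are placeholders, and the workload is a stand-in loop.

```python
import json
import time
from contextlib import contextmanager


class StageTimer:
    """Collect wall-clock time for named benchmark stages."""

    def __init__(self):
        self.stages = {}

    @contextmanager
    def stage(self, name):
        start = time.perf_counter()
        try:
            yield
        finally:
            self.stages[name] = time.perf_counter() - start

    def report(self):
        # The total is the sum of the stage times, so any time spent
        # between stages is not counted.
        return {"stages": dict(self.stages), "total": sum(self.stages.values())}


timer = StageTimer()
for name in ("stage_1", "stage_2", "stage_3", "stage_4"):  # placeholder names
    with timer.stage(name):
        sum(i * i for i in range(10_000))  # stand-in for the real work

print(json.dumps(timer.report(), indent=2))
```

Writing the report as JSON is only one option; the point is that each stage and the total end up in a single results structure.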

Photoscan shows good gen-on-gen performance uplifts, with the 5700G on par with the 11700K despite being lower powered. Both the R7 and R5 APUs are within touching distance of their X counterparts, and we see a good performance jump from the 4000G series.

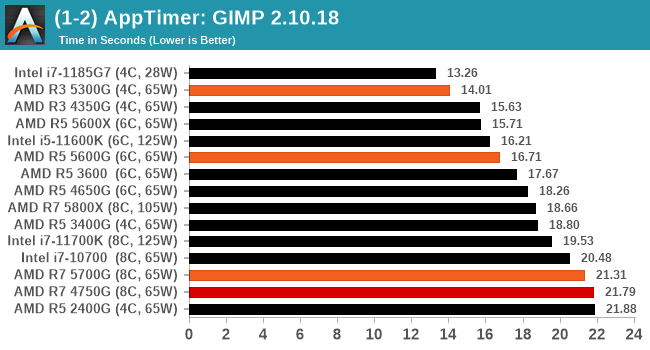

Application Opening: GIMP 2.10.18

First up is a test using a monstrous multi-layered xcf file to load GIMP. While the file is only a single ‘image’, it has so many high-quality layers embedded it was taking north of 15 seconds to open and to gain control on the mid-range notebook I was using at the time.

What we test here is the first run - normally on the first time a user loads the GIMP package from a fresh install, the system has to configure a few dozen files that remain optimized on subsequent opening. For our test we delete those configured optimized files in order to force a ‘fresh load’ each time the software is run. As it turns out, GIMP does optimizations for every CPU thread in the system, which means that higher thread-count processors take a lot longer to run.

We measure the time taken from calling the software to be opened, and until the software hands itself back over to the OS for user control. The test is repeated for a minimum of ten minutes or at least 15 loops, whichever comes first, with the first three results discarded.
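The loop policy described above can be sketched as follows. This is an illustration rather than our actual harness: the function and parameter names are ours, and taking the mean of the kept results is an assumption, since the text only says the first three are discarded.

```python
import time
from statistics import mean
from typing import Callable, List


def run_benchmark(
    run_once: Callable[[], float],
    min_seconds: float = 600.0,
    min_loops: int = 15,
    discard: int = 3,
    clock: Callable[[], float] = time.monotonic,
) -> float:
    """Repeat run_once until 15 loops or ten minutes, whichever comes first,
    then drop the first few results and average the rest (assumed)."""
    results: List[float] = []
    start = clock()
    while len(results) < min_loops and clock() - start < min_seconds:
        results.append(run_once())
    kept = results[discard:]
    if not kept:
        raise ValueError("not enough runs left after discarding warm-up results")
    return mean(kept)


# Usage with made-up load times: three slow warm-up runs, then steady runs.
fake_times = iter([9.0, 9.0, 9.0] + [5.0] * 12)
print(run_benchmark(lambda: next(fake_times)))  # 5.0
```

Passing the clock in as a parameter keeps the stopping rule easy to check without waiting ten real minutes.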

GIMP is a funny test where it gets harder the more cores you have - that's why the quad cores win here. However, slow quad cores (like the 2400G) still let you down. There seem to be minor gains here for the R5000 series with Zen 3 under the hood.

In this version of our test suite, all the science focused tests that aren’t ‘simulation’ work are now in our science section. This includes Brownian Motion, calculating digits of Pi, molecular dynamics, and for the first time, we’re trialing an artificial intelligence benchmark, both inference and training, that works under Windows using python and TensorFlow. Where possible these benchmarks have been optimized with the latest in vector instructions, except for the AI test – we were told that while it uses Intel’s Math Kernel Libraries, they’re optimized more for Linux than for Windows, and so it gives an interesting result when unoptimized software is used.

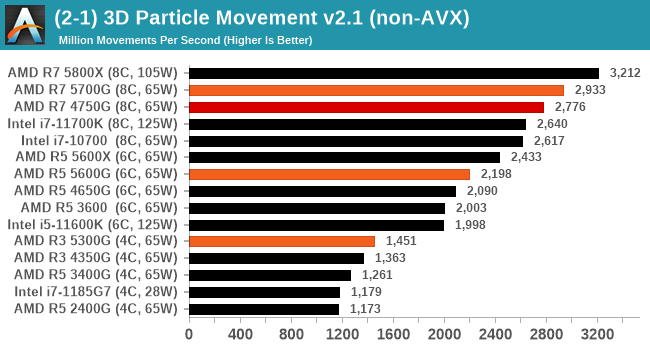

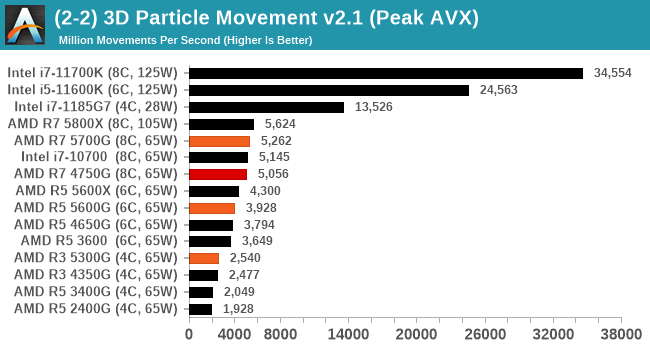

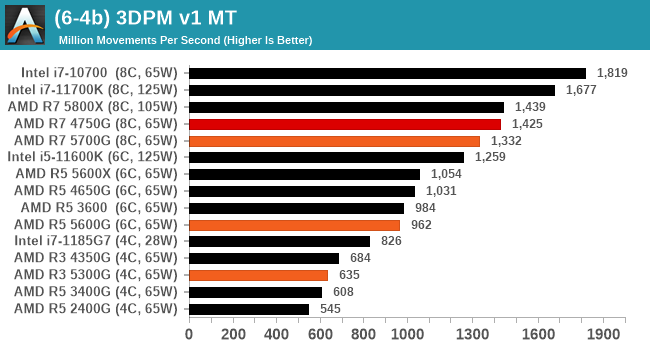

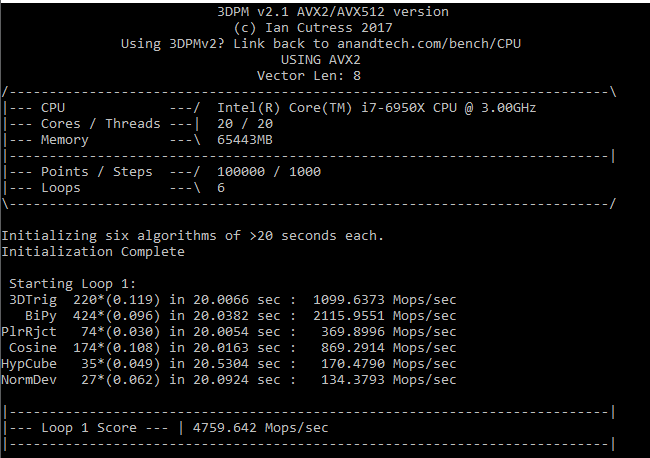

3D Particle Movement v2.1: Non-AVX and AVX2/AVX512

This is the latest version of this benchmark designed to simulate semi-optimized scientific algorithms taken directly from my doctorate thesis. This involves randomly moving particles in a 3D space using a set of algorithms that define random movement. Version 2.1 improves over 2.0 by passing the main particle structs by reference rather than by value, and decreasing the amount of double->float->double recasts the compiler was adding in.

The initial version of v2.1 is a custom C++ binary of my own code, and flags are in place to allow for multiple loops of the code with a custom benchmark length. By default this version runs six times and outputs the average score to the console, which we capture with a redirection operator that writes to file.

For v2.1, we also have a fully optimized AVX2/AVX512 version, which uses intrinsics to get the best performance out of the software. This was done by a former Intel AVX-512 engineer who now works elsewhere. According to Jim Keller, there are only a couple dozen or so people who understand how to extract the best performance out of a CPU, and this guy is one of them. To keep things honest, AMD also has a copy of the code, but has not proposed any changes.

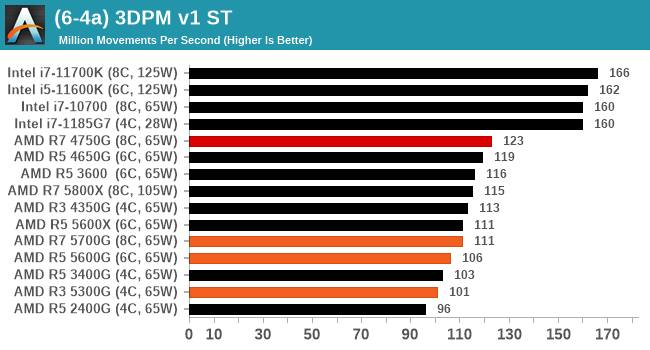

The 3DPM test is set to output millions of movements per second, rather than time to complete a fixed number of movements.
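To show what a score in this form means, here is a minimal sketch of a random-movement loop and the conversion to millions of movements per second. It is not the 3DPM code: the direction-picking method below is just one simple way to choose a random unit step, and all names are ours.

```python
import math
import random
import time


def move_particles(n_particles: int, n_steps: int, seed: int = 0):
    """Move each particle one unit step in a random direction, n_steps times."""
    rng = random.Random(seed)
    positions = [[0.0, 0.0, 0.0] for _ in range(n_particles)]
    for _ in range(n_steps):
        for p in positions:
            # Pick a uniformly random direction on the unit sphere.
            z = rng.uniform(-1.0, 1.0)
            phi = rng.uniform(0.0, 2.0 * math.pi)
            r = math.sqrt(1.0 - z * z)
            p[0] += r * math.cos(phi)
            p[1] += r * math.sin(phi)
            p[2] += z
    return positions


def millions_of_moves_per_second(n_particles: int, n_steps: int, elapsed_s: float) -> float:
    """Convert a run into a rate score, so that higher is better."""
    return n_particles * n_steps / elapsed_s / 1e6


start = time.perf_counter()
move_particles(1_000, 100)
elapsed = time.perf_counter() - start
print(f"{millions_of_moves_per_second(1_000, 100, elapsed):.2f} M moves/s")
```

Reporting a rate rather than a completion time means a faster processor gets a higher score, and runs of different lengths stay comparable.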

Ignoring the Peak AVX results which are heavily weighted in favor of AVX-512 enabled CPUs, with the non-AVX code we're seeing about a 5% performance gain on R5000G over R4000G.

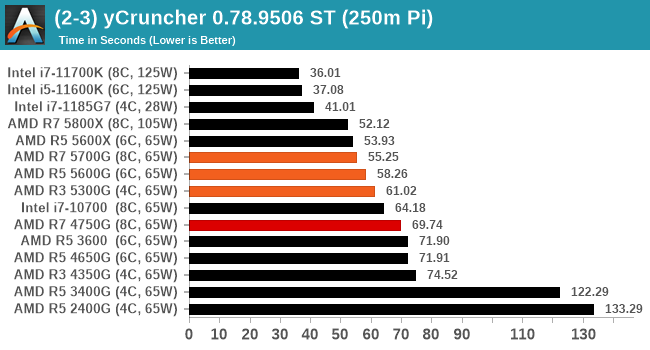

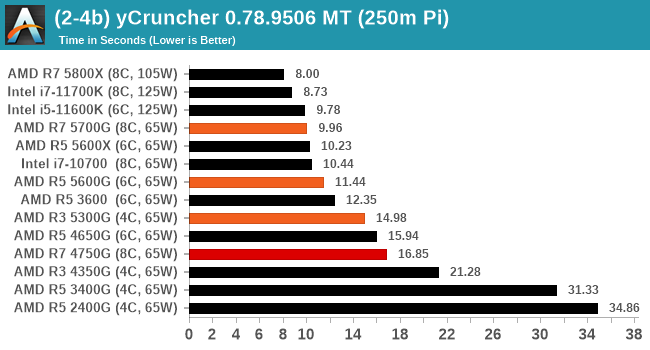

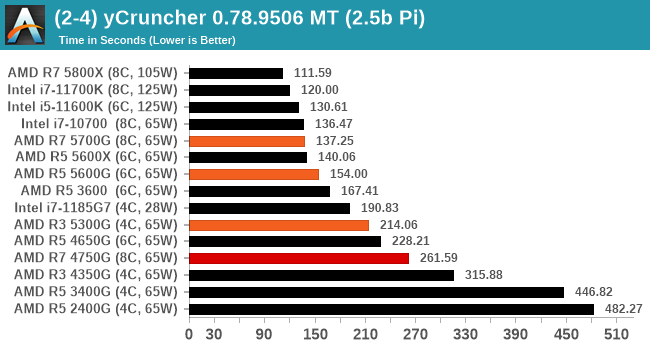

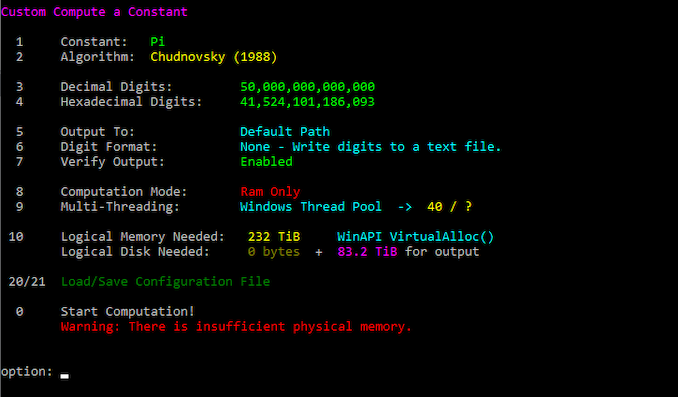

y-Cruncher 0.78.9506: www.numberworld.org/y-cruncher

If you ask anyone what sort of computer holds the world record for calculating the most digits of pi, I can guarantee that a good portion of those answers might point to some colossus super computer built into a mountain by a super-villain. Fortunately nothing could be further from the truth – the computer with the record is a quad socket Ivy Bridge server with 300 TB of storage. The software that was run to get that was y-cruncher.

Built by Alex Yee over the last part of a decade and some more, y-Cruncher is the software of choice for calculating billions and trillions of digits of the most popular mathematical constants. The software has held the world record for Pi since August 2010, and has broken the record a total of 7 times since. It also holds records for e, the Golden Ratio, and others. According to Alex, the program runs around 500,000 lines of code, and he has multiple binaries each optimized for different families of processors, such as Zen, Ice Lake, Sky Lake, all the way back to Nehalem, using the latest SSE/AVX2/AVX512 instructions where they fit in, and then further optimized for how each core is built.

For our purposes, we’re calculating Pi, as it is more compute bound than memory bound. In single thread mode we calculate 250 million digits, while in multithreaded mode we go for 2.5 billion digits. That 2.5 billion digit value requires ~12 GB of DRAM, and so is limited to systems with at least 16 GB.

The R3 5300G is crushing the R4000 series here, which is likely down to the unified L3 cache structure.

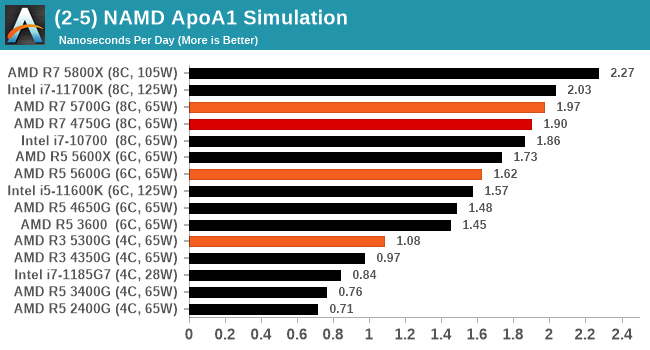

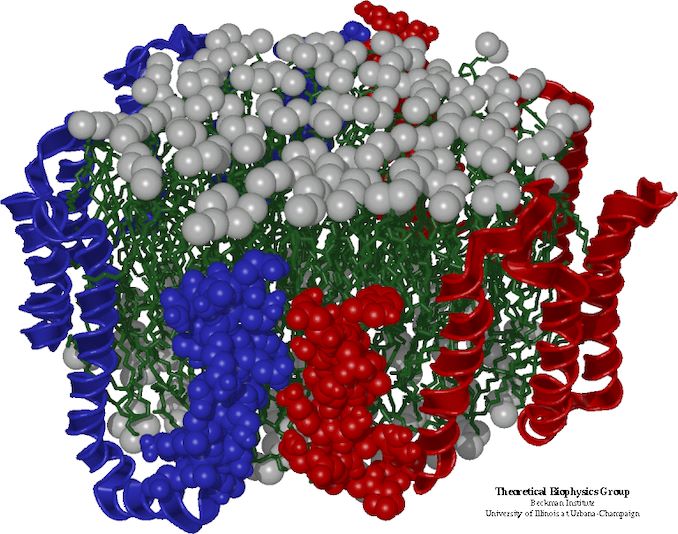

NAMD 2.13 (ApoA1): Molecular Dynamics

One of the popular science fields is modeling the dynamics of proteins. By looking at how the energy of active sites within a large protein structure changes over time, scientists behind the research can calculate required activation energies for potential interactions. This becomes very important in drug discovery. Molecular dynamics also plays a large role in protein folding, and in understanding what happens when proteins misfold, and what can be done to prevent it. Two of the most popular molecular dynamics packages in use today are NAMD and GROMACS.

NAMD, or Nanoscale Molecular Dynamics, has already been used in extensive Coronavirus research on the Frontera supercomputer. Typical simulations using the package are measured in how many nanoseconds per day can be calculated with the given hardware, and the ApoA1 protein (92,224 atoms) has been the standard model for molecular dynamics simulation.

Luckily the compute can home in on a typical ‘nanoseconds-per-day’ rate after only 60 seconds of simulation, however we stretch that out to 10 minutes to take a more sustained value, as by that time most turbo limits should be surpassed. The simulation itself works with 2 femtosecond timesteps. We use version 2.13 as this was the recommended version at the time of integrating this benchmark into our suite. The latest nightly builds we’re aware of have started to enable support for AVX-512, however for consistency in our benchmark suite, we are staying with 2.13. Other software that we test with has AVX-512 acceleration.
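For readers unfamiliar with the unit, the link between simulation steps and a nanoseconds-per-day figure is simple arithmetic. The sketch below uses the 2 femtosecond timestep mentioned above; the function name and the example step rate are hypothetical, not measured values.

```python
SECONDS_PER_DAY = 86_400
FS_PER_NS = 1e6  # 1 nanosecond = 1,000,000 femtoseconds


def ns_per_day(steps_per_second: float, timestep_fs: float = 2.0) -> float:
    """Convert a simulation step rate into simulated nanoseconds per day."""
    simulated_fs_per_day = steps_per_second * timestep_fs * SECONDS_PER_DAY
    return simulated_fs_per_day / FS_PER_NS


# Hypothetical rate for illustration: 10 timesteps per second of wall time.
print(f"{ns_per_day(10):.3f} ns/day")
```

At 2 fs per step, every 10 steps per second of wall time is worth about 1.7 ns of simulated time per day.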

The slower 5000G processors are +10% faster generationally, while the R7 is about 4% faster. They all sit behind the desktop counterparts though.

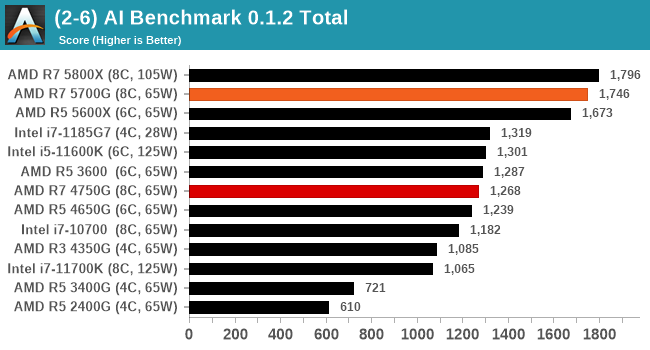

AI Benchmark 0.1.2 using TensorFlow: Link

Finding an appropriate artificial intelligence benchmark for Windows has been a holy grail of mine for quite a while. The problem is that AI is such a fast moving, fast paced world that whatever I compute this quarter will no longer be relevant in the next, and one of the key metrics in this benchmarking suite is being able to keep data over a long period of time. We’ve had AI benchmarks on smartphones for a while, given that smartphones are a better target for AI workloads, but it also makes some sense that everything on PC is geared towards Linux as well.

Thankfully however, the good folks over at ETH Zurich in Switzerland have converted their smartphone AI benchmark into something that’s useable in Windows. It uses TensorFlow, and for our benchmark purposes we’ve locked our testing down to TensorFlow 2.10, AI Benchmark 0.1.2, while using Python 3.7.6.

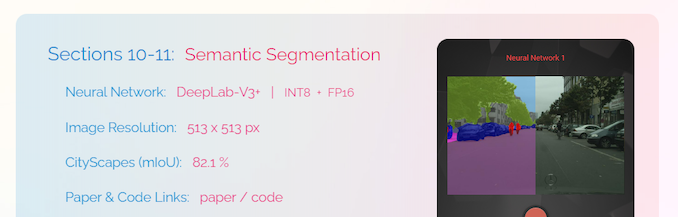

The benchmark runs through 19 different networks including MobileNet-V2, ResNet-V2, VGG-19 Super-Res, NVIDIA-SPADE, PSPNet, DeepLab, Pixel-RNN, and GNMT-Translation. All the tests probe both the inference and the training at various input sizes and batch sizes, except the translation that only does inference. It measures the time taken to do a given amount of work, and spits out a value at the end.

There is one big caveat for all of this, however. Speaking with the folks over at ETH, they use Intel’s Math Kernel Libraries (MKL) for Windows, and they’re seeing some incredible drawbacks. I was told that MKL for Windows doesn’t play well with multiple threads, and as a result any Windows results are going to perform a lot worse than Linux results. On top of that, after a given number of threads (~16), MKL kind of gives up and performance drops off quite substantially.

So why test it at all? Firstly, because we need an AI benchmark, and a bad one is still better than not having one at all. Secondly, if MKL on Windows is the problem, then by publicizing the test, it might just put a boot somewhere for MKL to get fixed. To that end, we’ll stay with the benchmark as long as it remains feasible.

Simulation and Science have a lot of overlap in the benchmarking world, however for this distinction we’re separating into two segments mostly based on the utility of the resulting data. The benchmarks that fall under Science have a distinct use for the data they output – in our Simulation section, these act more like synthetics but at some level are still trying to simulate a given environment.

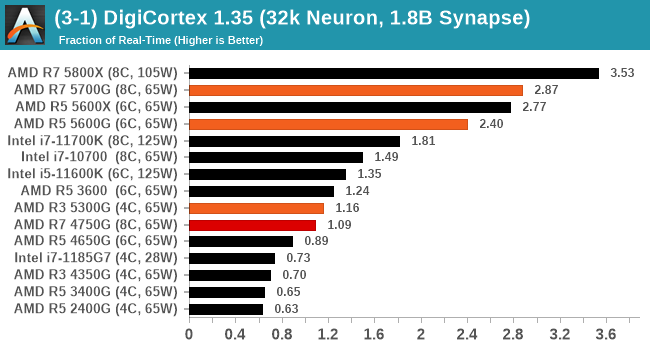

DigiCortex v1.35: link

DigiCortex is a pet project for the visualization of neuron and synapse activity in the brain. The software comes with a variety of benchmark modes, and we take the small benchmark which runs a 32k neuron/1.8B synapse simulation, similar to a small slug.

The results on the output are given as a fraction of whether the system can simulate in real-time, so anything above a value of one is suitable for real-time work. The benchmark offers a 'no firing synapse' mode, which in essence detects DRAM and bus speed, however we take the firing mode which adds CPU work with every firing.

The software originally shipped with a benchmark that recorded the first few cycles and output a result. So while on fast multi-threaded processors this made the benchmark last less than a few seconds, slow dual-core processors could be running for almost an hour. There is also the issue of DigiCortex starting with a base neuron/synapse map in ‘off mode’, giving a high result in the first few cycles as none of the nodes are currently active. We found that the performance settles down into a steady state after a while (when the model is actively in use), so we asked the author to allow for a ‘warm-up’ phase and for the benchmark to be the average over a second sample time.

For our test, we give the benchmark 20000 cycles to warm up and then take the data over the next 10000 cycles for the test – on a modern processor this takes 30 seconds and 150 seconds respectively. This is then repeated a minimum of 10 times, with the first three results rejected. Results are shown as a multiple of real-time calculation.

The Zen3 processors have been doing well in the DigiCortex test due to the rearrangement of load/store ports with better memory access. We're seeing >2x gen-on-gen gains here.

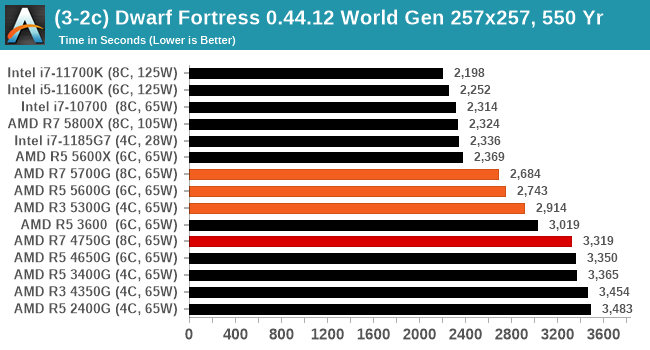

Dwarf Fortress 0.44.12: Link

Another long standing request for our benchmark suite has been Dwarf Fortress, a popular management/roguelike indie video game, first launched in 2006 and still being regularly updated today, aiming for a Steam launch sometime in the future.

Emulating the ASCII interfaces of old, this title is a rather complex beast, which can generate environments subject to millennia of rule, famous faces, peasants, and key historical figures and events. The further you get into the game, depending on the size of the world, the slower it becomes as it has to simulate more famous people, more world events, and the natural way that humanoid creatures take over an environment. Like some kind of virus.

For our test we’re using DFMark. DFMark is a benchmark built by vorsgren on the Bay12Forums that gives two different modes built on DFHack: world generation and embark. These tests can be configured, but range anywhere from 3 minutes to several hours. After analyzing the test, we ended up going for three different world generation sizes:

- Small, a 65x65 world with 250 years, 10 civilizations and 4 megabeasts

- Medium, a 127x127 world with 550 years, 10 civilizations and 4 megabeasts

- Large, a 257x257 world with 550 years, 40 civilizations and 10 megabeasts

DFMark outputs the time to run any given test, so this is what we use for the output. We loop the small test for as many times possible in 10 minutes, the medium test for as many times in 30 minutes, and the large test for as many times in an hour.
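A time-budgeted loop of this kind might look like the following sketch. It is not the script used for the review: `loop_for_budget` and `BUDGETS` are hypothetical names, and it assumes that a run already in progress when the budget expires is allowed to finish.

```python
import time


def loop_for_budget(run_once, budget_seconds, clock=time.monotonic):
    """Call run_once() repeatedly until the time budget is spent.

    A new run starts only while budget remains; a run in flight is
    allowed to finish (an assumption, not something the text states).
    Returns the list of per-run durations in seconds.
    """
    durations = []
    start = clock()
    while clock() - start < budget_seconds:
        t0 = clock()
        run_once()
        durations.append(clock() - t0)
    return durations


# Budgets from the text, in seconds
BUDGETS = {"small": 10 * 60, "medium": 30 * 60, "large": 60 * 60}
```

Passing the clock in as a parameter makes the loop easy to check with a fake timer instead of waiting for an hour.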

In our largest sub-test, the Intel processors crack on ahead, however when comparing AMD gen-on-gen, even the R3 5300G comes out ahead of the previous generation's R7.

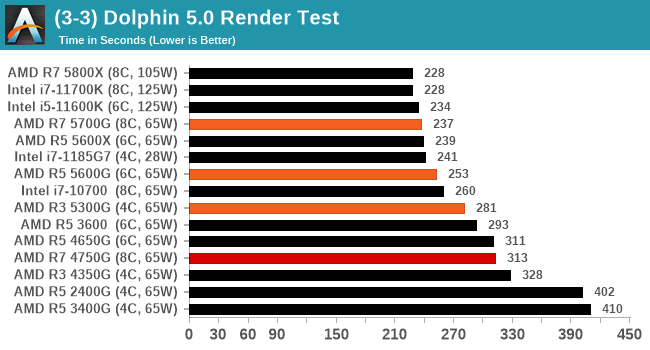

Dolphin v5.0 Emulation: Link

Many emulators are often bound by single thread CPU performance, and general reports tended to suggest that Haswell provided a significant boost to emulator performance. This benchmark runs a Wii program that ray traces a complex 3D scene inside the Dolphin Wii emulator. Performance on this benchmark is a good proxy of the speed of Dolphin CPU emulation, which is an intensive single core task using most aspects of a CPU. Results are given in seconds, where the Wii itself scores 1051 seconds.

Emulation performance improves across the board.

Rendering tests, compared to others, are often a little more simple to digest and automate. All the tests put out some sort of score or time, usually in an obtainable way that makes it fairly easy to extract. These tests are some of the most strenuous in our list, due to the highly threaded nature of rendering and ray-tracing, and can draw a lot of power. If a system is not properly configured to deal with the thermal requirements of the processor, the rendering benchmarks are where it would show most easily as the frequency drops over a sustained period of time. Most benchmarks in this case are re-run several times, and the key to this is having an appropriate idle/wait time between benchmarks to allow for temperatures to normalize from the last test.

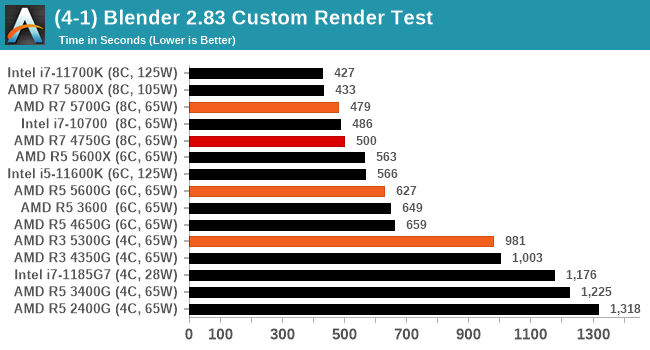

Blender 2.83 LTS: Link

One of the popular tools for rendering is Blender, with it being a public open source project that anyone in the animation industry can get involved in. This extends to conferences, use in films and VR, with a dedicated Blender Institute, and everything you might expect from a professional software package (except perhaps a professional grade support package). With it being open-source, studios can customize it in as many ways as they need to get the results they require. It ends up being a big optimization target for both Intel and AMD in this regard.

For benchmarking purposes, we fall back to rendering a single frame from a detailed project. Most reviews, as we have done in the past, focus on one of the classic Blender renders, known as BMW_27. It can take anywhere from a few minutes to almost an hour on a regular system. However now that Blender has moved onto a Long Term Support model (LTS) with the latest 2.83 release, we decided to go for something different.

We use this scene, called PartyTug at 6AM by Ian Hubert, which is the official image of Blender 2.83. It is 44.3 MB in size, and uses some of the more modern compute properties of Blender. As it is more complex than the BMW scene, but uses different aspects of the compute model, time to process is roughly similar to before. We loop the scene for at least 10 minutes, taking the average time of the completions taken. Blender offers a command-line tool for batch commands, and we redirect the output into a text file.

Blender performance increases generationally are around 4%.

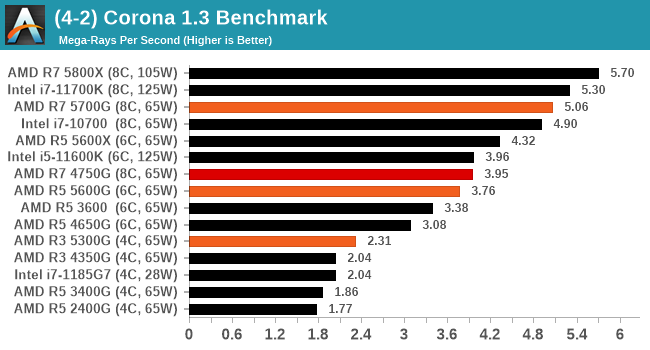

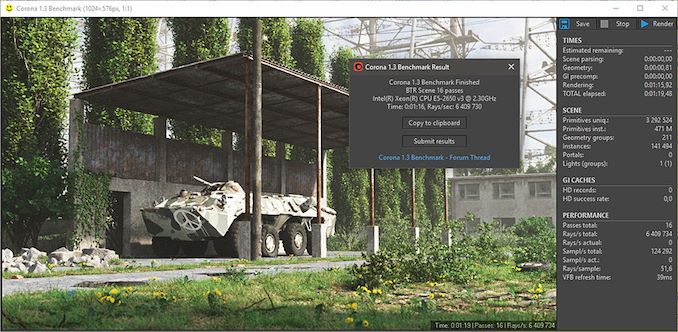

Corona 1.3: Link

Corona is billed as a popular high-performance photorealistic rendering engine for 3ds Max, with development for Cinema 4D support as well. In order to promote the software, the developers produced a downloadable benchmark on the 1.3 version of the software, with a ray-traced scene involving a military vehicle and a lot of foliage. The software does multiple passes, calculating the scene, geometry, preconditioning and rendering, with performance measured in the time to finish the benchmark (the official metric used on their website) or in rays per second (the metric we use to offer a more linear scale).

The standard benchmark provided by Corona is interface driven: the scene is calculated and displayed in front of the user, with the ability to upload the result to their online database. We got in contact with the developers, who provided us with a non-interface version that allowed for command-line entry and retrieval of the results very easily. We loop around the benchmark five times, waiting 60 seconds between each, and taking an overall average. The time to run this benchmark can be around 10 minutes on a Core i9, up to over an hour on a quad-core 2014 AMD processor or dual-core Pentium.
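The repeat-with-idle-time pattern described above can be sketched as follows. This is a minimal illustration rather than the review's automation: `run_with_cooldown` is a hypothetical name, and `run_once` stands in for whatever launches the benchmark and returns its score.

```python
import time
from statistics import mean


def run_with_cooldown(run_once, repeats=5, cooldown_s=60, sleep=time.sleep):
    """Run a benchmark `repeats` times, idling between runs, and average."""
    scores = []
    for i in range(repeats):
        scores.append(run_once())
        if i < repeats - 1:  # no need to wait after the final run
            sleep(cooldown_s)
    return mean(scores)
```

The idle period gives temperatures a chance to settle, so that each run starts from a similar thermal state.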

With Corona, the 5700G is +28% over the previous generation. That jump narrows down to +13% with the R3, but we're seeing good yearly improvements.

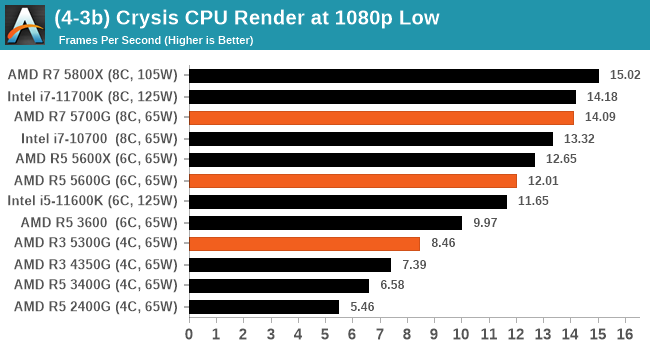

Crysis CPU-Only Gameplay

One of the most oft-used memes in computer gaming is ‘Can It Run Crysis?’. The original 2007 game, built in CryEngine by Crytek, was heralded as a computationally complex title for the hardware at the time and several years after, suggesting that a user needed graphics hardware from the future in order to run it. Fast forward over a decade, and the game runs fairly easily on modern GPUs.

But can we also apply the same concept to pure CPU rendering? Can a CPU, on its own, render Crysis? Since 64 core processors entered the market, one can dream. So we built a benchmark to see whether the hardware can.

For this test, we’re running Crysis’ own GPU benchmark, but in CPU render mode. This is a 2000 frame test, with medium and low settings.

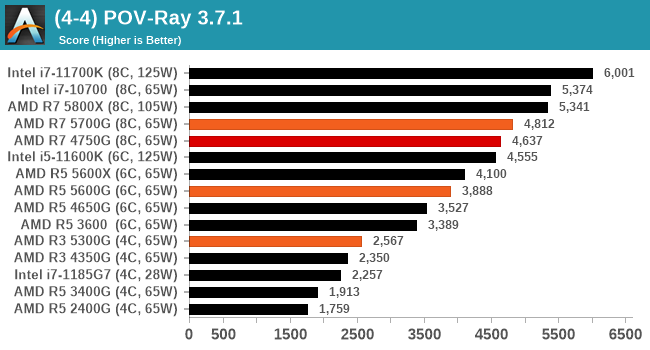

POV-Ray 3.7.1: Link

A long time benchmark staple, POV-Ray is another rendering program that is well known to load up every single thread in a system, regardless of cache and memory levels. After a long period of POV-Ray 3.7 being the latest official release, when AMD launched Ryzen the POV-Ray codebase suddenly saw a range of activity from both AMD and Intel, knowing that the software (with the built-in benchmark) would be an optimization tool for the hardware.

We had to stick a flag in the sand when it came to selecting the version that was fair to both AMD and Intel, and still relevant to end-users. Version 3.7.1 fixes a significant bug in the early 2017 code that was advised against in both Intel and AMD manuals regarding write-after-read, leading to a nice performance boost.

The benchmark can take over 20 minutes on a slow system with few cores, or around a minute or two on a fast system, or seconds with a dual high-core count EPYC. Because POV-Ray draws a large amount of power and current, it is important to make sure the cooling is sufficient here and the system stays in its high-power state. Using a motherboard with a poor power-delivery and low airflow could create an issue that won’t be obvious in some CPU positioning if the power limit only causes a 100 MHz drop as it changes P-states.

We see +3% performance on the R7, whereas R3/R5 is more akin to +10%.

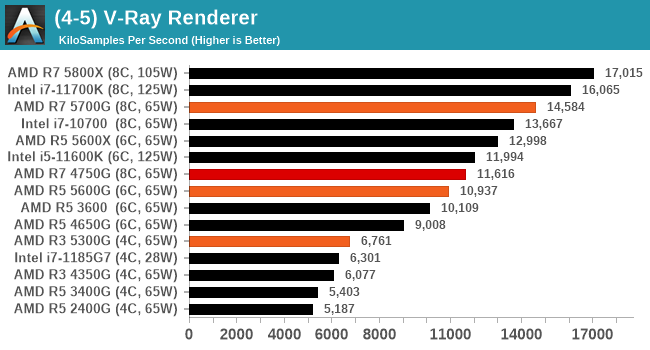

V-Ray: Link

We have a couple of renderers and ray tracers in our suite already, however V-Ray’s benchmark was requested often enough for us to roll it into our suite. Built by ChaosGroup, V-Ray is a 3D rendering package compatible with a number of popular commercial imaging applications, such as 3ds Max, Maya, Unreal, Cinema 4D, and Blender.

We run the standard standalone benchmark application, but in an automated fashion to pull out the result in the form of kilosamples/second. We run the test six times and take an average of the valid results.

With a big +26% jump for the R7 (+21% for R5, +11% for R3), some rendering tasks really like the new processors.

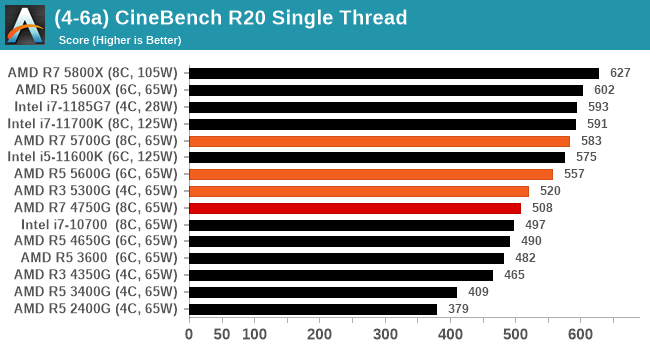

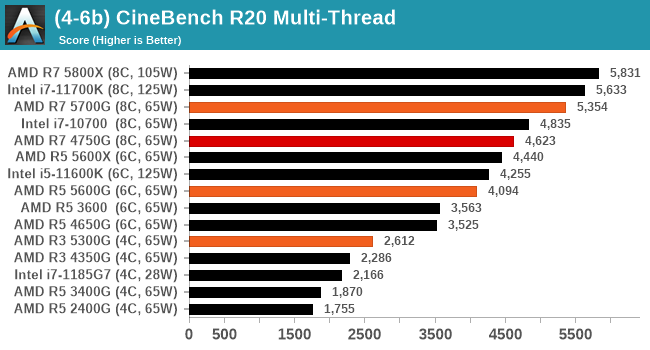

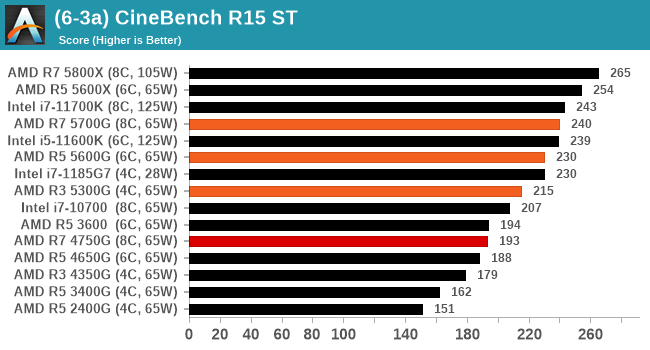

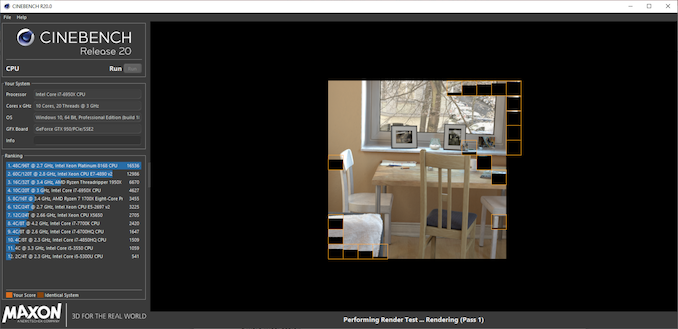

Cinebench R20: Link

Another common staple of a benchmark suite is Cinebench. Based on Cinema4D, Cinebench is a purpose-built benchmark that renders a scene with both single and multi-threaded options. The scene is identical in both cases. The R20 version means that it targets Cinema 4D R20, a slightly older version of the software which is currently on version R21. Cinebench R20 was launched given that the R15 version had been out a long time, and despite the difference between the benchmark and the latest version of the software on which it is based, Cinebench results are often quoted a lot in marketing materials.

Results for Cinebench R20 are not comparable to R15 or older, because the scene being used is different and the code path has been updated. The results are output as a score from the software, which is inversely proportional to the time taken. Using the benchmark flags for single CPU and multi-CPU workloads, we run the software from the command line which opens the test, runs it, and dumps the result into the console which is redirected to a text file. The test is repeated for a minimum of 10 minutes for both ST and MT, and then the runs averaged.

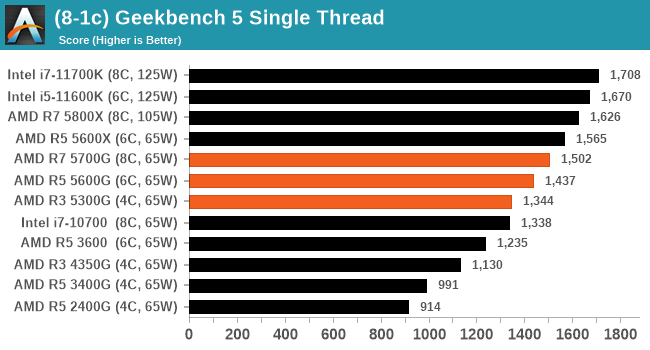

As we saw when Zen 3 first launched, the performance improvements in single thread are quite substantial - even the 5300G is faster than the previous generation's best APU. Compare it back to the R5 3400G, the last retail APU, and it's almost a 50% jump in Cinebench 1T performance.
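Percentage jumps like the one quoted above are presumably relative differences between two results. A small sketch of that arithmetic, with a hypothetical helper name and made-up scores rather than figures from our charts:

```python
def uplift_percent(new, old, higher_is_better=True):
    """Percentage improvement of `new` over `old`.

    For time-based results (lower is better), the ratio is inverted so
    that a faster completion still reports a positive uplift.
    """
    if higher_is_better:
        return (new / old - 1.0) * 100.0
    return (old / new - 1.0) * 100.0


# Hypothetical score-based result: 300 points rising to 450 points
print(uplift_percent(450, 300))
# Hypothetical time-based result: 100 seconds dropping to 80 seconds
print(uplift_percent(80, 100, higher_is_better=False))
```

Inverting the ratio for timed tests keeps score-based and time-based benchmarks on the same footing when they are compared side by side.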

One of the interesting elements on modern processors is encoding performance. This covers two main areas: encryption/decryption for secure data transfer, and video transcoding from one video format to another.

In the encrypt/decrypt scenario, how data is transferred and by what mechanism is pertinent to on-the-fly encryption of sensitive data - a process by which more modern devices are leaning to for software security.

Video transcoding as a tool to adjust the quality, file size and resolution of a video file has boomed in recent years, such as providing the optimum video for devices before consumption, or for game streamers who are wanting to upload the output from their video camera in real-time. As we move into live 3D video, this task will only get more strenuous, and it turns out that the performance of certain algorithms is a function of the input/output of the content.

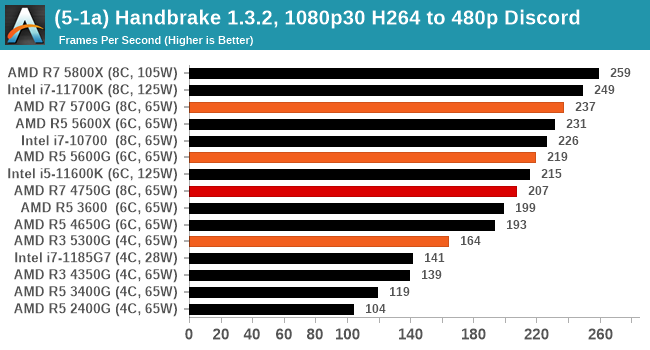

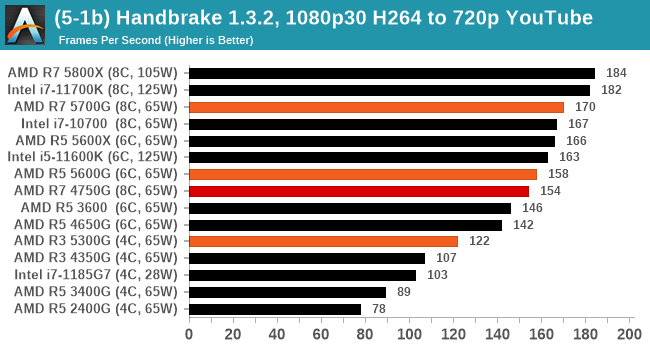

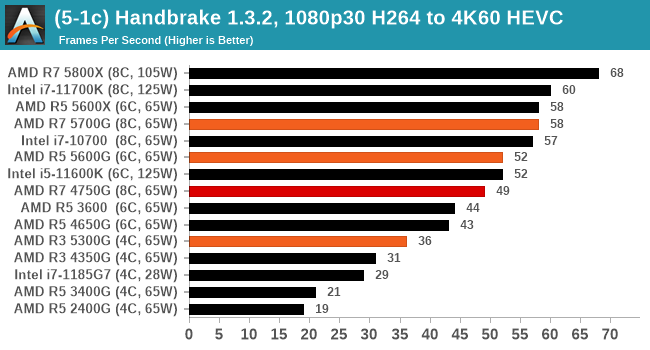

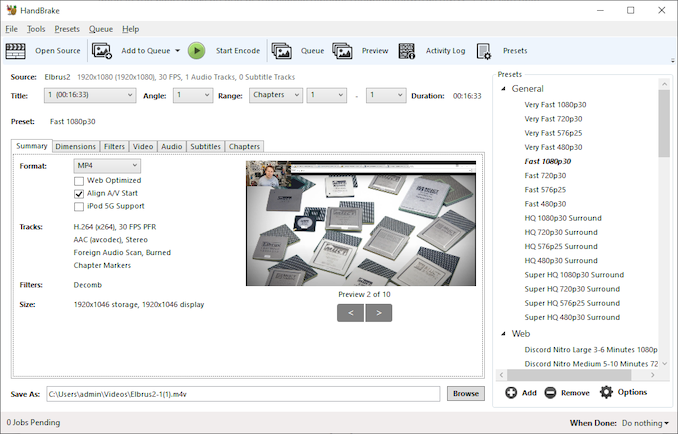

HandBrake 1.32: Link

Video transcoding (both encode and decode) is a hot topic in performance metrics as more and more content is being created. The first consideration is the standard in which the video is encoded, which can be lossless or lossy, trade performance for file-size, trade quality for file-size, or do all of the above, and which can increase encoding rates to help accelerate decoding rates. Alongside Google's favorite codecs, VP9 and AV1, there are others that are prominent: H264, the older codec, is practically everywhere and is designed to be optimized for 1080p video, and HEVC (or H.265), which aims to provide the same quality as H264 but at a lower file-size (or better quality for the same size). HEVC is important as 4K is streamed over the air, meaning fewer bits need to be transferred for the same quality content. There are other codecs coming to market designed for specific use cases all the time.

Handbrake is a favored tool for transcoding, with the later versions using copious amounts of newer APIs to take advantage of co-processors, like GPUs. It is available on Windows via an interface or can be accessed through the command-line, with the latter making our testing easier, with a redirection operator for the console output.

We take the compiled version of this 16-minute YouTube video about Russian CPUs at 1080p30 h264 and convert into three different files: (1) 480p30 ‘Discord’, (2) 720p30 ‘YouTube’, and (3) 4K60 HEVC.

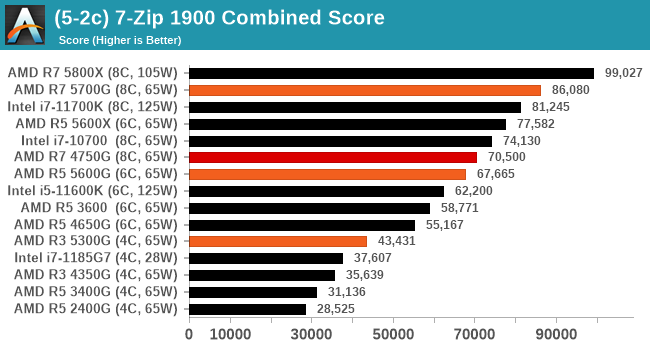

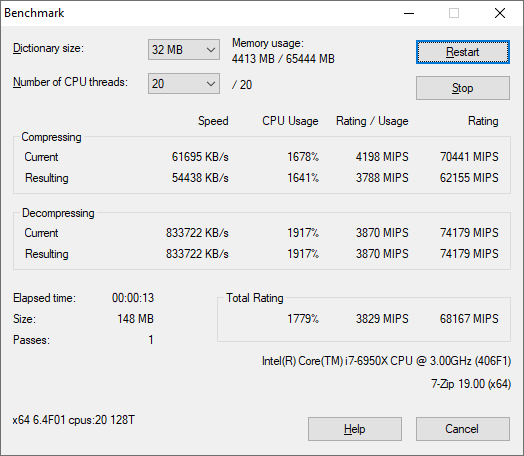

7-Zip 1900: Link

The first compression benchmark tool we use is the open-source 7-zip, which typically offers good scaling across multiple cores. 7-zip is the compression tool most cited by readers as one they would rather see benchmarks on, and the program includes a built-in benchmark tool for both compression and decompression.

The tool can either be run from inside the software or through the command line. We take the latter route as it is easier to automate, obtain results, and put through our process. The command line flags available offer an option for repeated runs, and the output provides the average automatically through the console. We direct this output into a text file and regex the required values for compression, decompression, and a combined score.
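Pulling values out of a redirected console log with a regular expression could look like the sketch below. The log text here is a hypothetical stand-in: 7-Zip's real benchmark output is laid out differently, so the pattern would need adapting, and `extract_ratings` is an illustrative name rather than part of any script used for the review.

```python
import re

# Hypothetical captured console text, NOT 7-Zip's actual output format
SAMPLE_LOG = """\
compression rating: 61234 MIPS
decompression rating: 70321 MIPS
combined rating: 65777 MIPS
"""

# One labelled integer per line; MULTILINE lets ^ and $ match at each line
PATTERN = re.compile(
    r"^(compression|decompression|combined) rating:\s+(\d+)\s+MIPS$",
    re.MULTILINE,
)


def extract_ratings(text):
    """Return a {label: value} dict for every matching line in `text`."""
    return {name: int(value) for name, value in PATTERN.findall(text)}


print(extract_ratings(SAMPLE_LOG))
```

In practice the text would come from the redirected file rather than an inline string.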

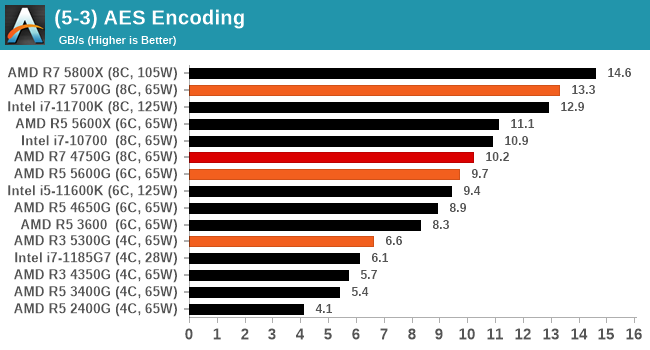

AES Encoding

Algorithms using AES coding have spread far and wide as a ubiquitous tool for encryption. Again, this is another CPU limited test, and modern CPUs have special AES pathways to accelerate their performance. We often see scaling in both frequency and cores with this benchmark. We use the latest version of TrueCrypt and run its benchmark mode over 1GB of in-DRAM data. Results shown are the GB/s average of encryption and decryption.

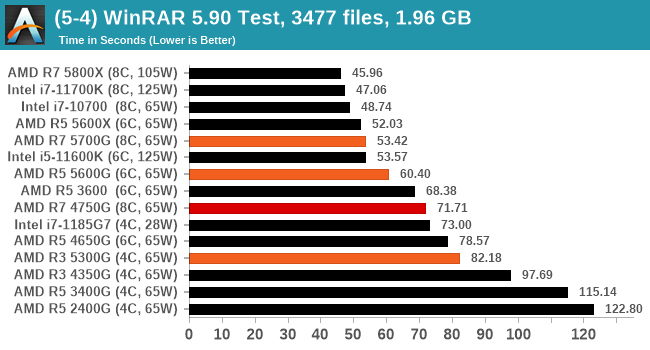

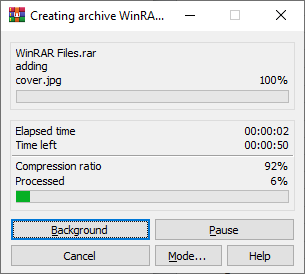

WinRAR 5.90: Link

For the 2020 test suite, we move to the latest version of WinRAR in our compression test. WinRAR in some quarters is more user friendly than 7-Zip, hence its inclusion. Rather than use a benchmark mode as we did with 7-Zip, here we take a set of files representative of a generic stack:

- 33 video files, each 30 seconds, in 1.37 GB,

- 2834 smaller website files in 370 folders in 150 MB,

- 100 Beat Saber music tracks and input files, for 451 MB

This is a mixture of compressible and incompressible formats. The results shown are the time taken to encode the file. Due to DRAM caching, we run the test for 20 minutes times and take the average of the last five runs when the benchmark is in a steady state.

For automation, we use AHK’s internal timing tools from initiating the workload until the window closes signifying the end. This means the results are contained within AHK, with an average of the last 5 results being easy enough to calculate.

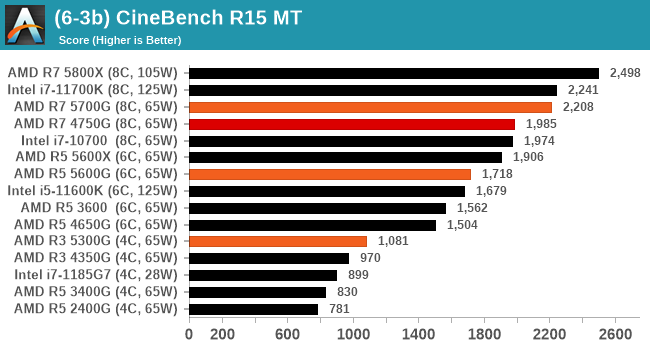

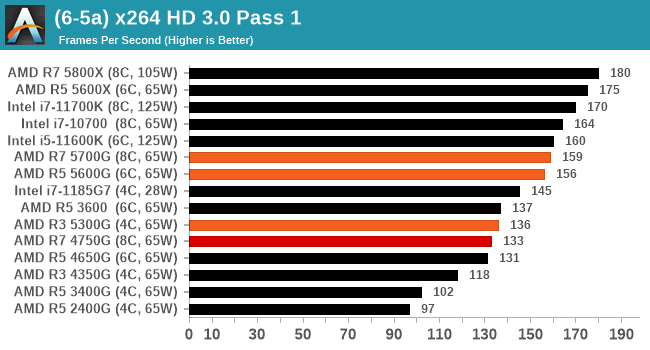

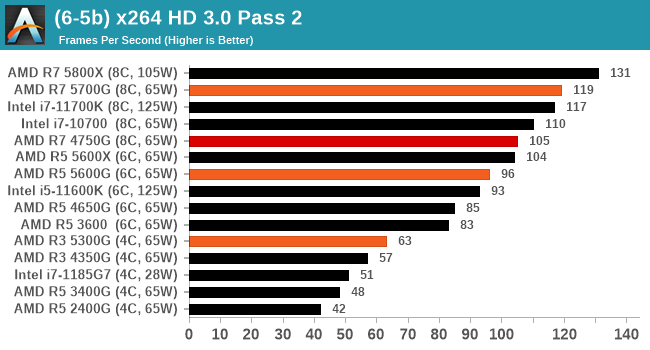

In order to gather data to compare with older benchmarks, we are still keeping a number of tests under our ‘legacy’ section. This includes all the former major versions of CineBench (R15, R11.5, R10) as well as x264 HD 3.0 and the first very naïve version of 3DPM v2.1. We won’t be transferring the data over from the old testing into Bench, otherwise it would be populated with 200 CPUs with only one data point, so it will fill up as we test more CPUs like the others.

The other section here is our web tests.

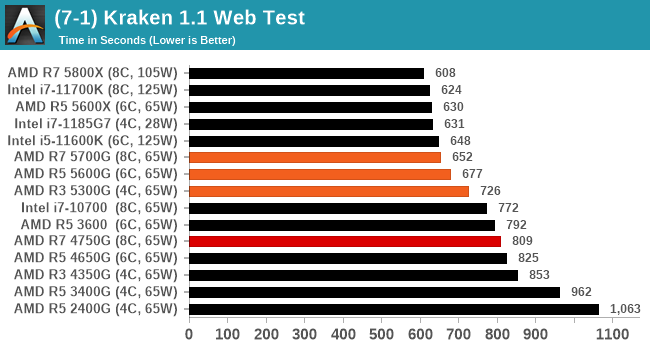

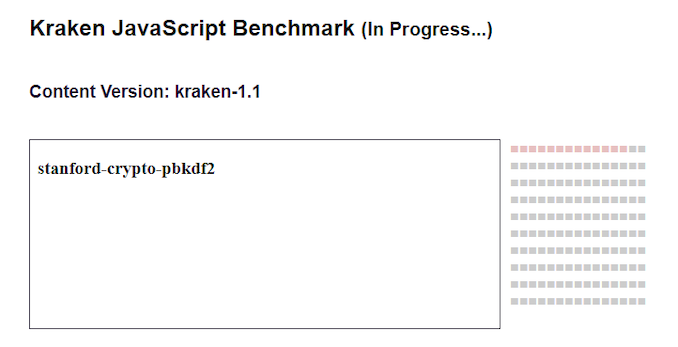

Web Tests: Kraken, Octane, and Speedometer

Benchmarking using web tools is always a bit difficult. Browsers change almost daily, and the way the web is used changes even quicker. While there is some scope for advanced computational based benchmarks, most users care about responsiveness, which requires a strong back-end to work quickly to provide on the front-end. The benchmarks we chose for our web tests are essentially industry standards – at least once upon a time.

It should be noted that for each test, the browser is closed and re-opened anew with a fresh cache. We use a fixed Chromium version for our tests with the update capabilities removed to ensure consistency.

Mozilla Kraken 1.1

Kraken is a 2010 benchmark from Mozilla and does a series of JavaScript tests. These tests are a little more involved than previous tests, looking at artificial intelligence, audio manipulation, image manipulation, json parsing, and cryptographic functions. The benchmark starts with an initial download of data for the audio and imaging, and then runs through 10 times giving a timed result.

We loop through the 10-run test four times (so that’s a total of 40 runs), and average the four end-results. The result is given as time to complete the test, and we’re reaching a slow asymptotic limit with regard to the highest-IPC processors.

Sizeable single thread improvements.

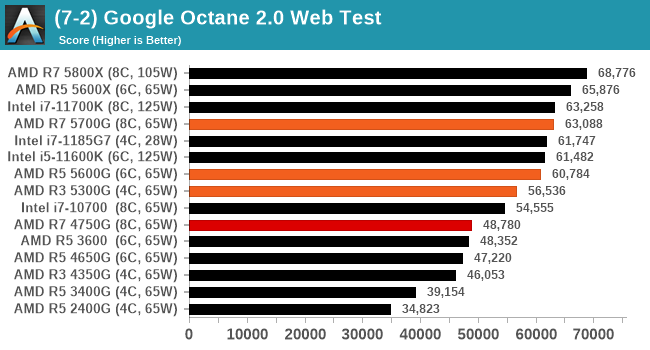

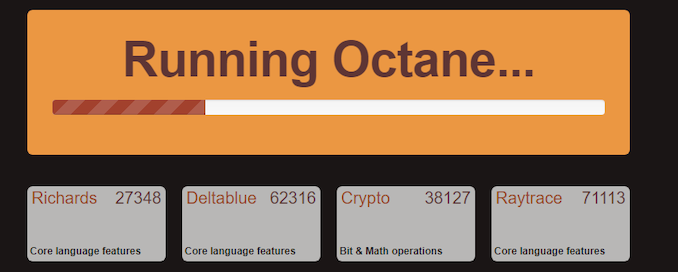

Google Octane 2.0

Our second test is also JavaScript based, but uses a lot more variation of newer JS techniques, such as object-oriented programming, kernel simulation, object creation/destruction, garbage collection, array manipulations, compiler latency and code execution.

Octane was developed after the discontinuation of other tests, with the goal of being more web-like than previous tests. It has been a popular benchmark, making it an obvious target for optimizations in the JavaScript engines. Ultimately it was retired in early 2017 due to this, although it is still widely used as a tool to determine general CPU performance in a number of web tasks.

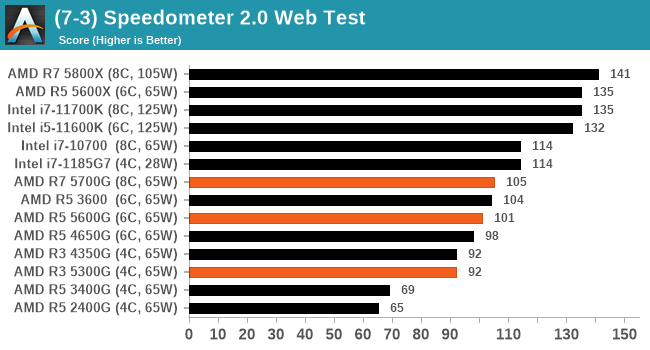

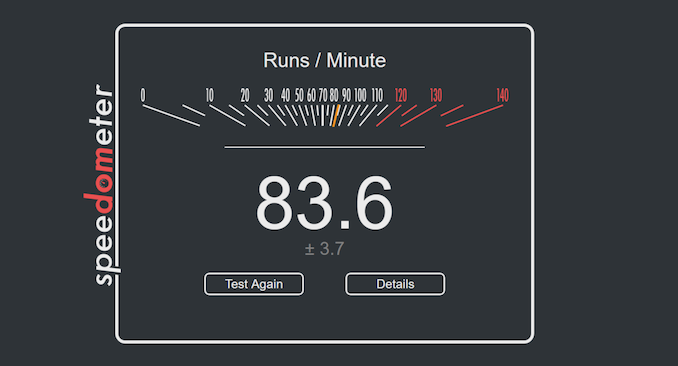

Speedometer 2: JavaScript Frameworks

Our newest web test is Speedometer 2, which is a test over a series of JavaScript frameworks to do three simple things: build a list, enable each item in the list, and remove the list. All the frameworks implement the same visual cues, but obviously apply them from different coding angles.

Our test goes through the list of frameworks, and produces a final score indicative of ‘rpm’, one of the benchmark’s internal metrics.

We repeat over the benchmark for a dozen loops, taking the average of the last five.

Most of the people in our industry have a love/hate relationship when it comes to synthetic tests. On the one hand, they’re often good for quick summaries of performance and are easy to use, but most of the time the tests aren’t related to any real software. Synthetic tests are often very good at burrowing down to a specific set of instructions and maximizing the performance out of those. Due to requests from a number of our readers, we have the following synthetic tests.

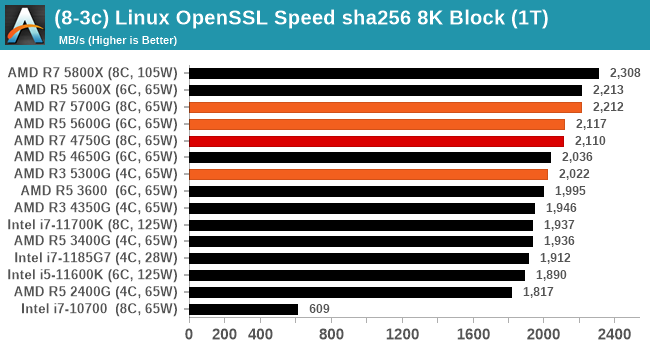

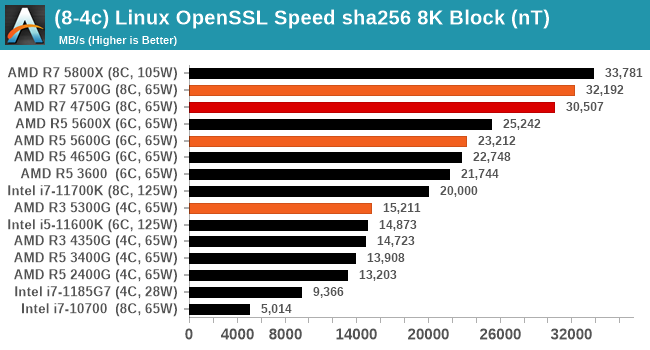

Linux OpenSSL Speed: SHA256

One of our readers reached out in early 2020 and stated that he was interested in looking at OpenSSL hashing rates in Linux. Luckily OpenSSL in Linux has a function called ‘speed’ that allows the user to determine how fast the system is for any given hashing algorithm, as well as signing and verifying messages.

OpenSSL offers a lot of algorithms to choose from, and based on a quick Twitter poll, we narrowed it down to the following:

- rsa2048 sign and rsa2048 verify

- sha256 at 8K block size

- md5 at 8K block size

For each of these tests, we run them in single thread and multithreaded mode. All the graphs are in our benchmark database, Bench, and we use the sha256 results in published reviews.
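The set of runs listed above could be assembled along these lines. This is a sketch rather than our actual automation: it assumes the stock `openssl speed` command with its `-multi` option for parallel runs, and the function name and labels are hypothetical. The digest tests report throughput at several block sizes, so the 8K figure would be read from that output.

```python
import os

# Algorithms named in the list above; rsa2048 reports sign and verify together
ALGORITHMS = ["rsa2048", "sha256", "md5"]


def speed_commands(threads=None):
    """Return (label, argv) pairs for single- and multi-threaded runs."""
    threads = threads or os.cpu_count() or 1
    commands = []
    for algo in ALGORITHMS:
        commands.append((f"{algo}-1T", ["openssl", "speed", algo]))
        commands.append(
            (f"{algo}-nT", ["openssl", "speed", "-multi", str(threads), algo])
        )
    return commands


for label, argv in speed_commands(threads=16):
    print(label, " ".join(argv))
```

Each `argv` list can be handed to `subprocess.run(argv, capture_output=True, text=True)` to capture the output for later parsing.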

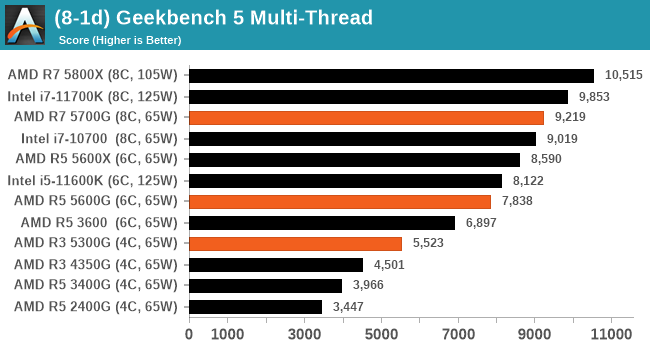

GeekBench 5: Link

As a common tool for cross-platform testing between mobile, PC, and Mac, GeekBench is an ultimate exercise in synthetic testing across a range of algorithms looking for peak throughput. Tests include encryption, compression, fast Fourier transform, memory operations, n-body physics, matrix operations, histogram manipulation, and HTML parsing.

I’m including this test due to popular demand, although the results do come across as overly synthetic, and a lot of users often put a lot of weight behind the test due to the fact that it is compiled across different platforms (although with different compilers).

We have both GB5 and GB4 results in our benchmark database. GB5 was introduced to our test suite after already having tested ~25 CPUs, and so the results are a little sporadic by comparison. These spots will be filled in when we retest any of the CPUs.

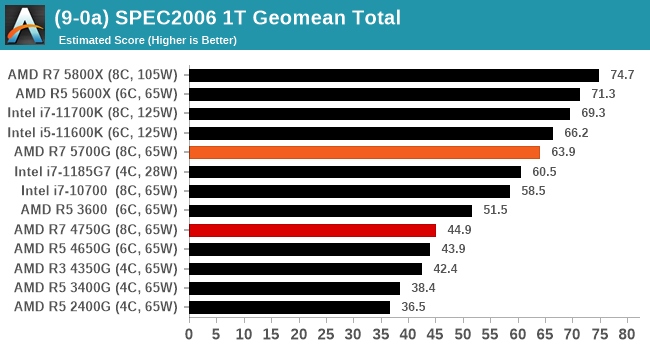

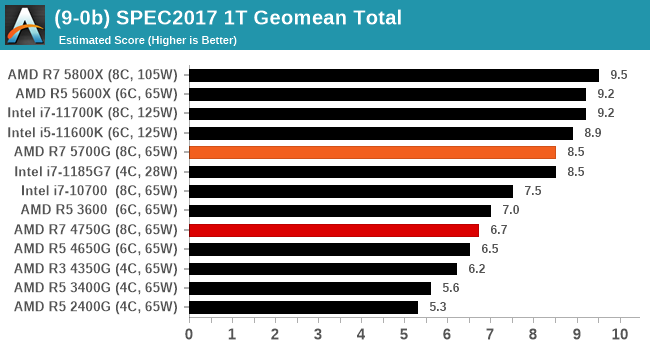

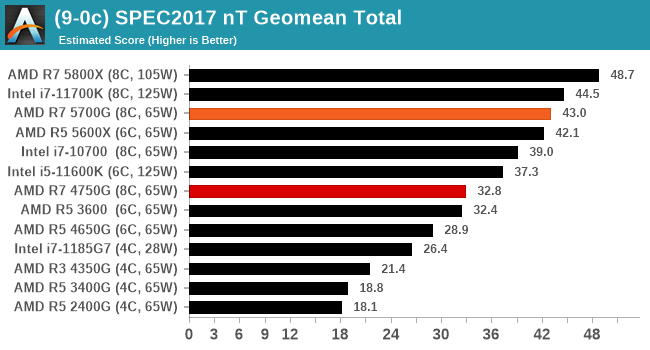

SPEC2017 and SPEC2006 are a series of standardized tests used to probe the overall performance between different systems, different architectures, different microarchitectures, and setups. The code has to be compiled, and then the results can be submitted to an online database for comparison. It covers a range of integer and floating point workloads, and can be very optimized for each CPU, so it is important to check how the benchmarks are being compiled and run.

We run the tests in a harness built through Windows Subsystem for Linux, developed by our own Andrei Frumusanu. WSL has some odd quirks, with one test not running due to a WSL fixed stack size, but for like-for-like testing is good enough. SPEC2006 is deprecated in favor of 2017, but remains an interesting comparison point in our data. Because our scores aren’t official submissions, as per SPEC guidelines we have to declare them as internal estimates from our part.

For compilers, we use LLVM both for C/C++ and Fortran tests, and for Fortran we’re using the Flang compiler. The rationale of using LLVM over GCC is better cross-platform comparisons to platforms that only have LLVM support and future articles where we’ll investigate this aspect more. We’re not considering closed-sourced compilers such as MSVC or ICC.

clang version 10.0.0

-Ofast -fomit-frame-pointer

-march=x86-64

-mtune=core-avx2

-mfma -mavx -mavx2

Our compiler flags are straightforward, with basic -Ofast and relevant ISA switches to allow for AVX2 instructions. We decided to build our SPEC binaries on AVX2, which puts a limit on Haswell as how old we can go before the testing will fall over. This also means we don’t have AVX512 binaries, primarily because in order to get the best performance, the AVX-512 intrinsic should be packed by a proper expert, as with our AVX-512 benchmark. The major vendors, AMD, Intel, and Arm, all support the way in which we are testing SPEC.
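As a rough illustration of how the flags listed above combine into a single compiler invocation, the sketch below assembles a command line for one source file. The helper name and the `example.c` / `example.o` file names are hypothetical, and the real SPEC harness drives the build through its own configuration rather than a script like this.

```python
# Flags as listed above
CFLAGS = [
    "-Ofast", "-fomit-frame-pointer",
    "-march=x86-64",
    "-mtune=core-avx2",
    "-mfma", "-mavx", "-mavx2",
]


def compile_command(source, output, compiler="clang"):
    """Build the argv list for compiling one source file to an object."""
    return [compiler, *CFLAGS, "-c", source, "-o", output]


# Hypothetical file names, for illustration only
print(" ".join(compile_command("example.c", "example.o")))
```

Keeping the flags in one list makes it easy to confirm that every binary is built with the same ISA switches.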

To note, the requirements for the SPEC licence state that any benchmark results from SPEC have to be labelled ‘estimated’ until they are verified on the SPEC website as a meaningful representation of the expected performance. This is most often done by the big companies and OEMs to showcase performance to customers, however it is quite over the top for what we do as reviewers.

For each of the SPEC targets we are doing, SPEC2006 1T, SPEC2017 1T, and SPEC2017 nT, rather than publish all the separate test data in our reviews, we are going to condense it down into a few interesting data points. The full per-test values are in our benchmark database.

We’re still running the tests for the Ryzen 5 5600G and Ryzen 3 5300G, but the Ryzen 7 5700G scores strong.

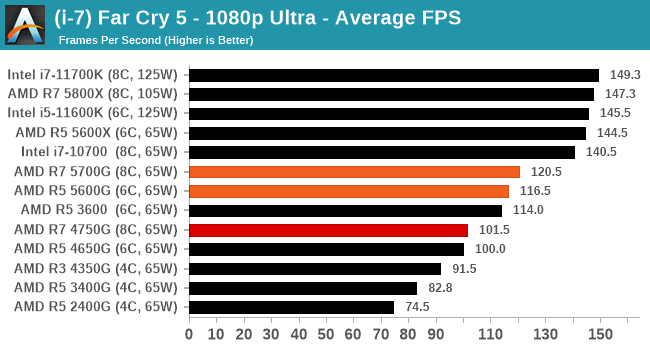

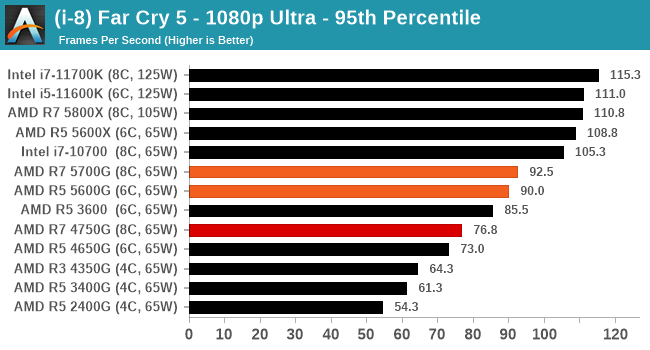

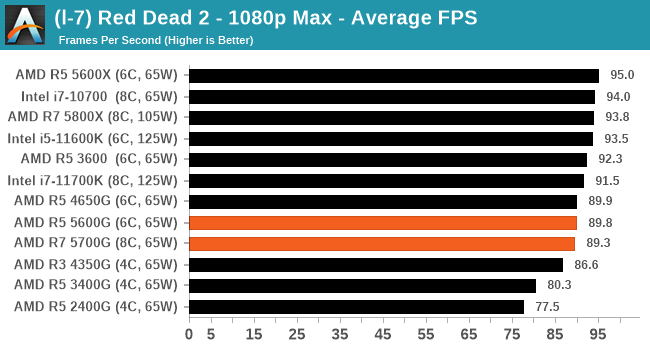

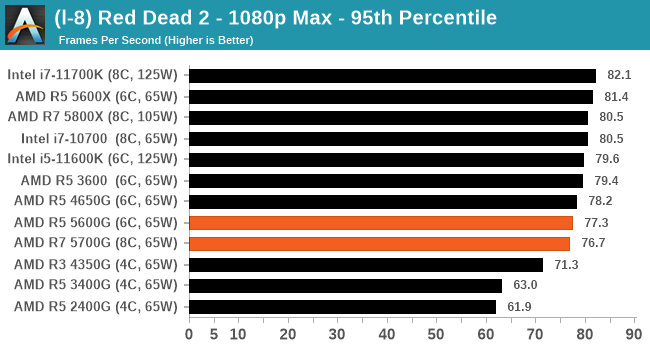

1080p Max with RTX 2080 Ti

The last generation flagship GPU might be considered a bit overkill for 1080p gaming, however when we start cranking up settings, we end up crossing the realm from high refresh rate gaming down to regular gaming, and the CPU can end up being the bottleneck here. It provides an interesting set of results.

A full list of results at various resolutions and settings can be found in our Benchmark Database.

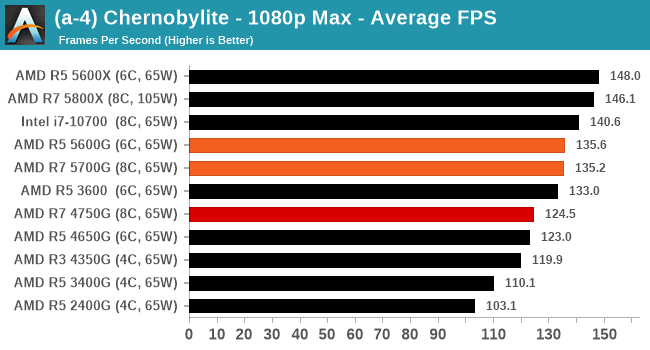

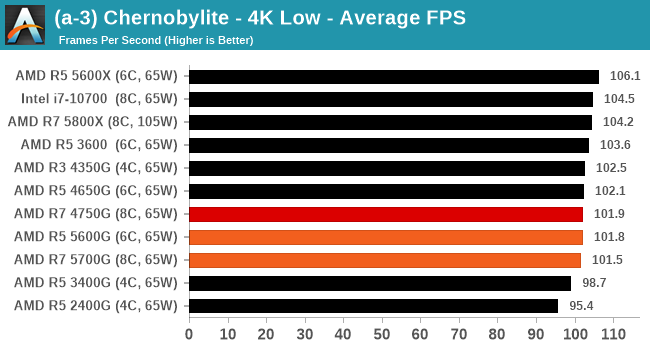

Generation on generation, we're getting a small bump in Chernobylite.

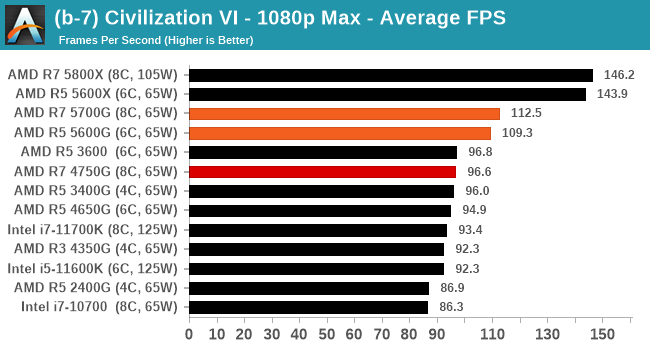

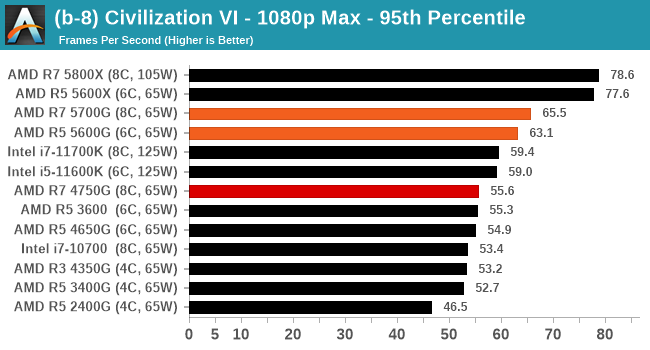

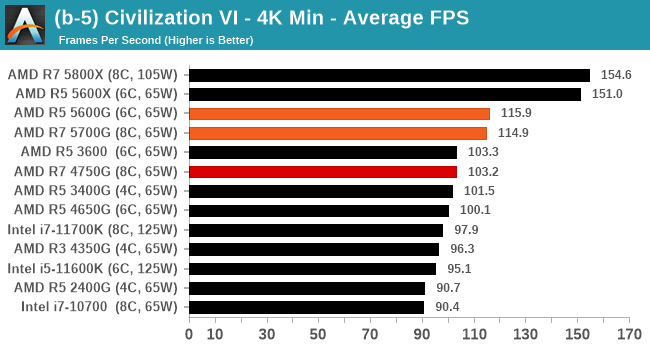

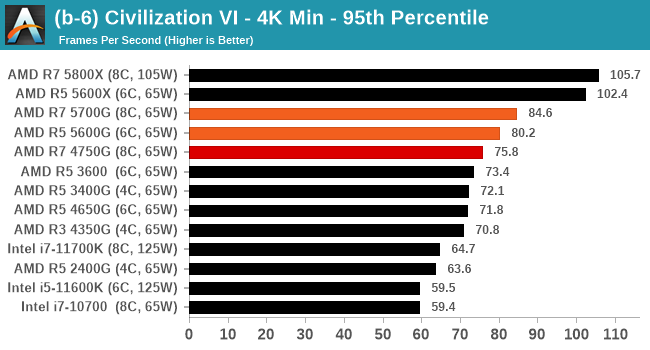

One of the critical elements here is that Civilization 6 likes the Zen 3 cores, but only when there's enough L3 cache to go around.

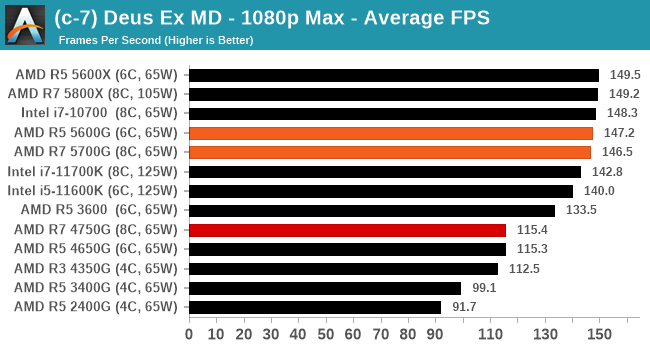

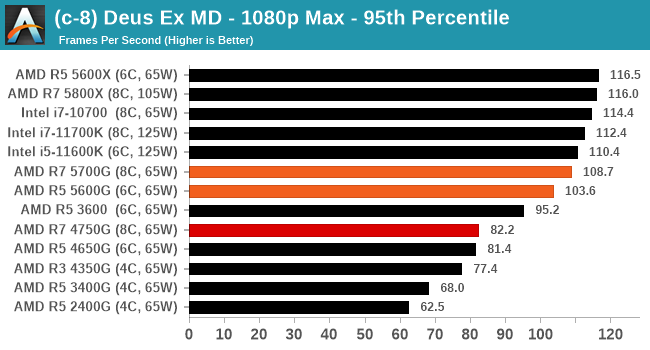

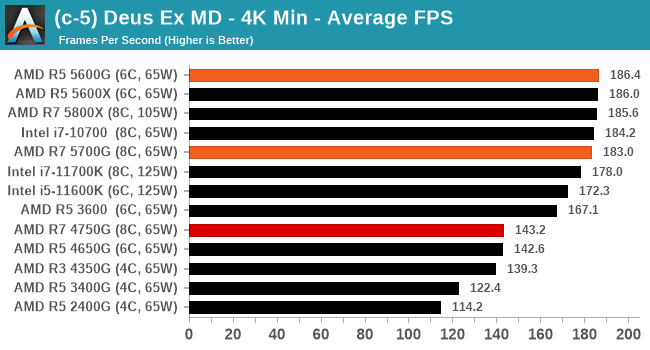

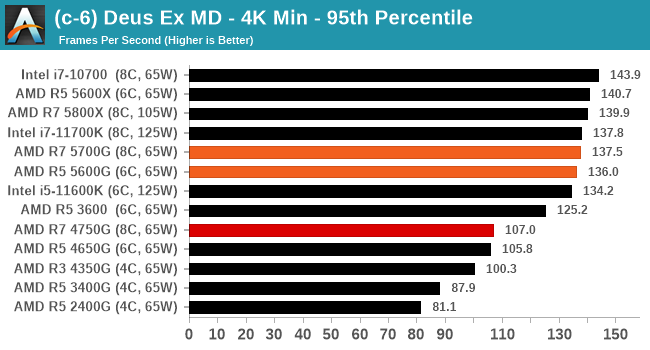

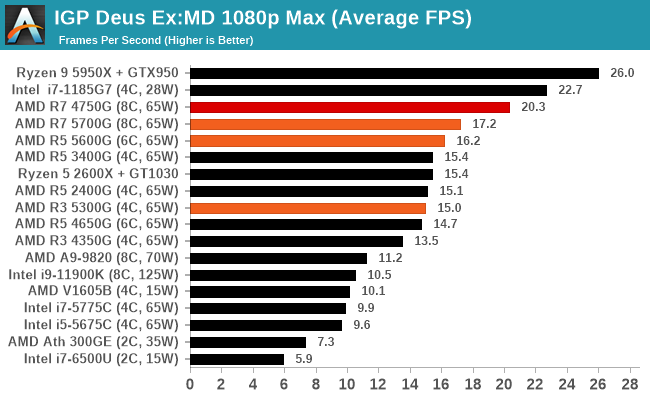

Deus Ex gets a sizeable uplift with the new APUs over the previous generation.

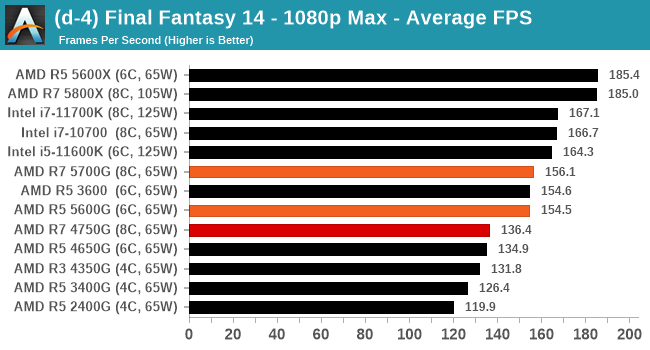

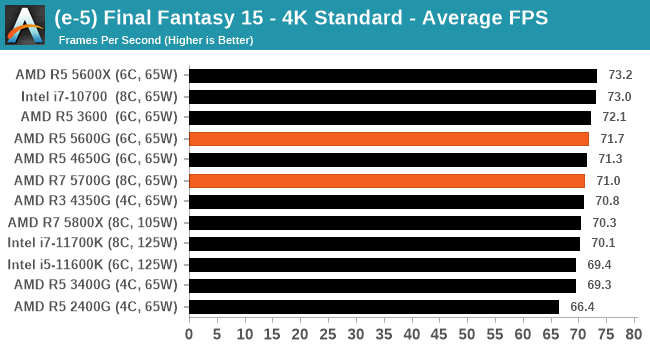

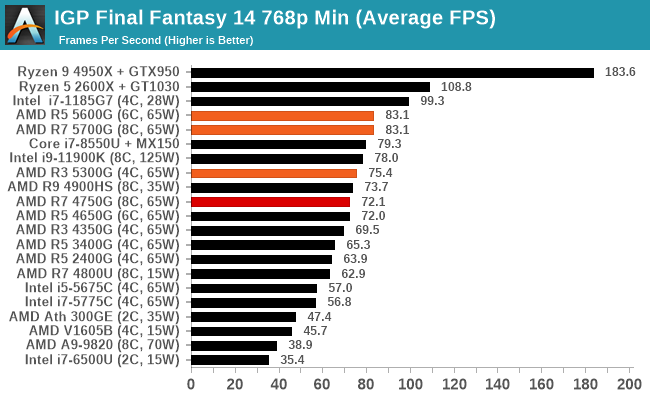

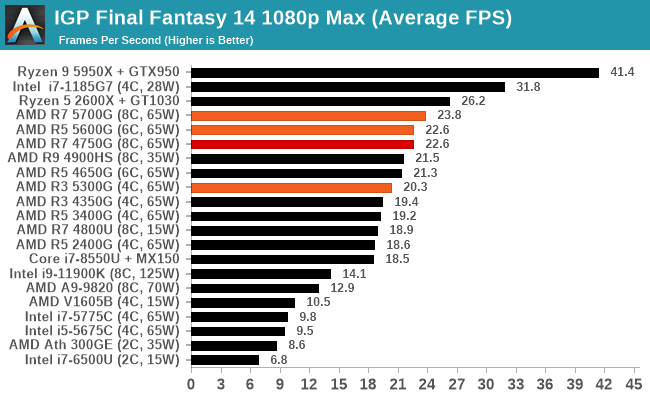

144 Hz on Final Fantasy 14, these chips are ready.

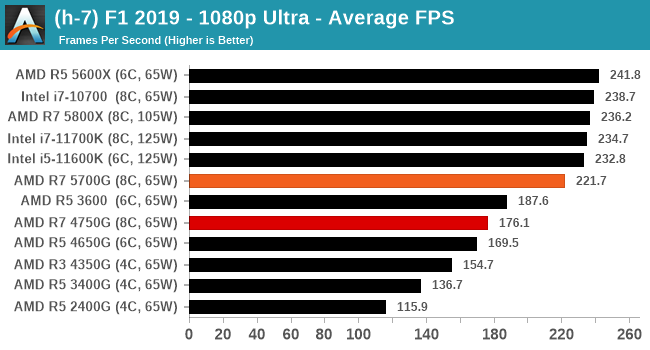

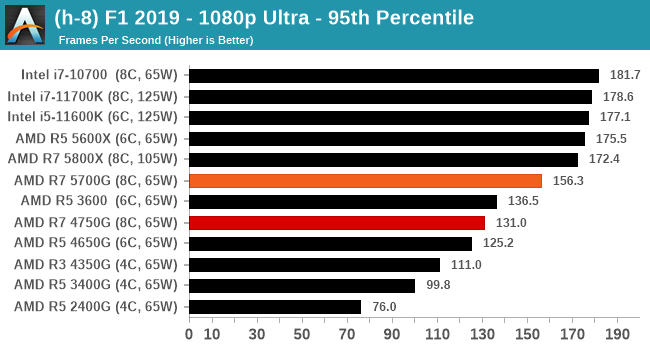

F1 2019 is a game that enjoys the Zen 3 change as well.

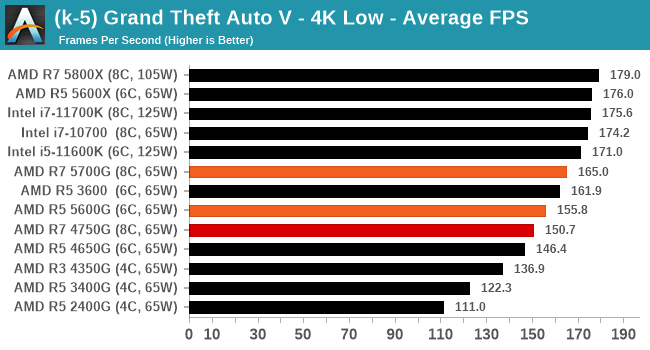

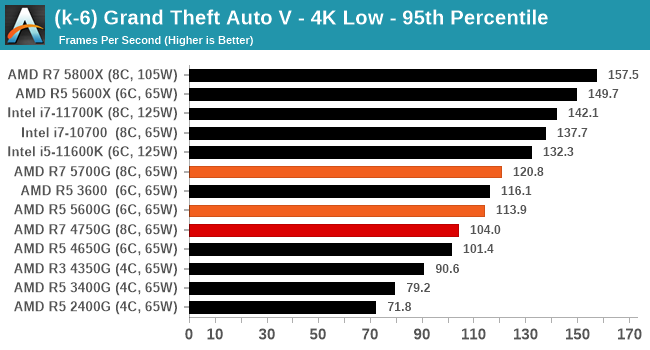

4K Minimum with RTX 2080 Ti

By contrast to 1080p Max, our 4K Minimum testing aims at finding differences between CPUs at a playable resolution. There are still pixels to churn, but the 2080 Ti should at least be hitting 60 FPS in most games here.

A full list of results at various resolutions and settings can be found in our Benchmark Database.

No real change in Chernobylite.

Civilization 6 gets a smaller increase in performance here than the 1080p Maximum test, but there's still a benefit over the previous generation. That being said, the AMD desktop CPUs with more cache pull ahead a lot here.

Deus Ex seems to come to an asymptotic limit, and while the 4000G APUs were behind the curve, the 5000G APUs are solidly there.

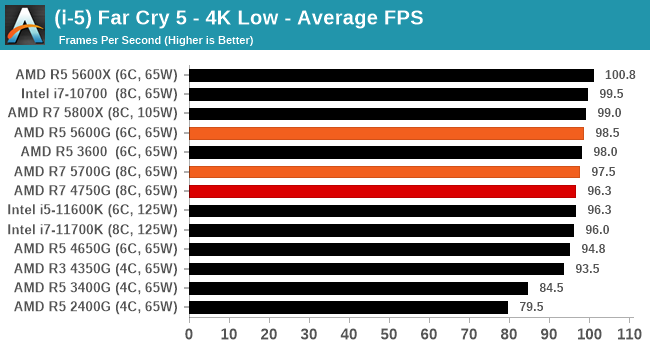

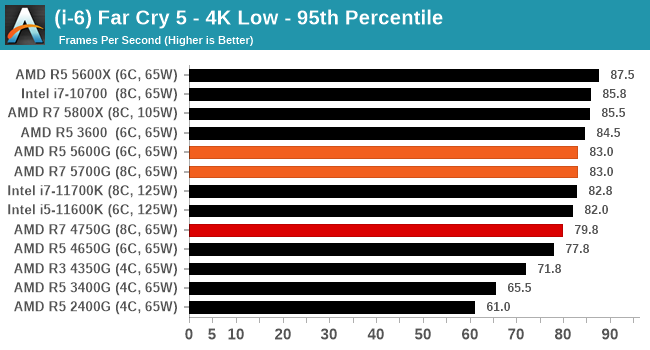

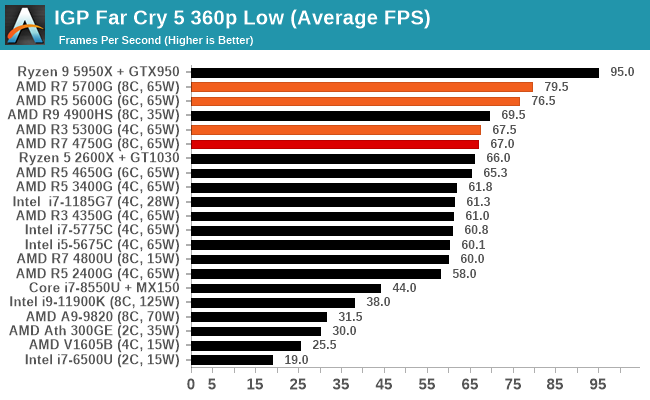

All CPUs pretty much hit a limit in FF15 above and Far Cry 5 below.

GTA 5 hits an odd glitchy mess around 180 FPS, and the new 5000G CPUs can push the RTX 2080 Ti in that direction a bit further - at this point it's probably best to start cranking up some detail to avoid it.

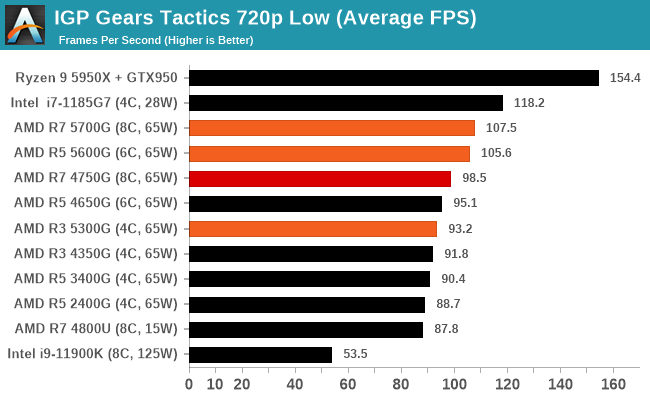

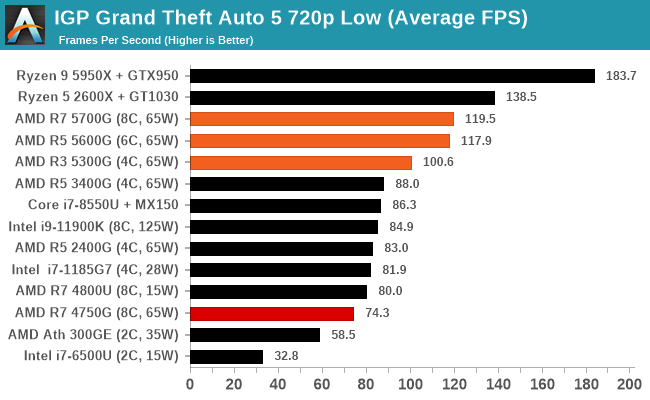

Finding 60+ FPS

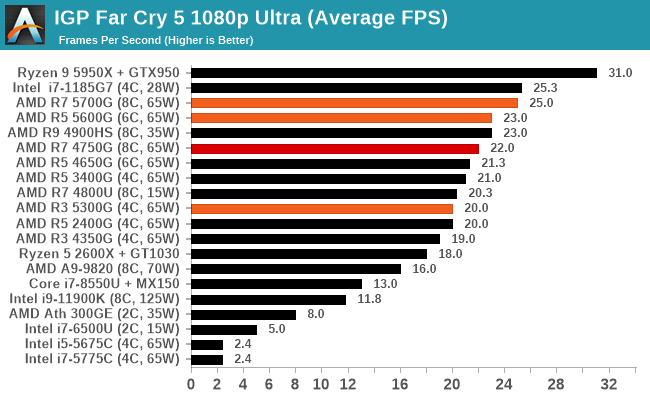

Never mind 30 frames per second, if we want gaming to be smooth, we look for true 60 FPS gaming. It's going to be a benchmark for any integrated graphics solution, but one question is if games are getting more difficult to render faster than integrated graphics is improving. Given how we used to talk about 30-40 FPS on integrated graphics before Ryzen, it stands to reason that the base requirements of games are only ever getting worse. To meet that need, we need processors with a good level of integrated oomph.

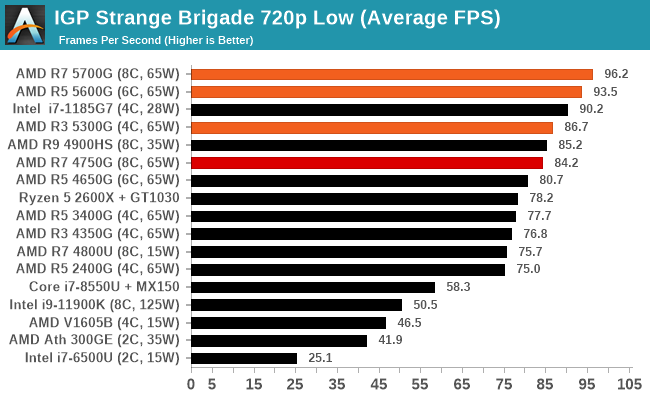

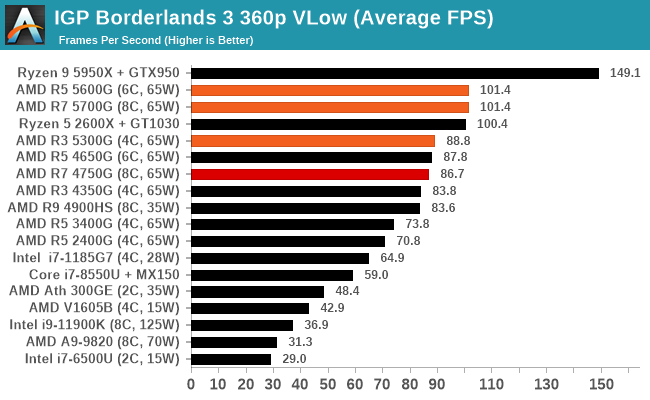

So here are a series of our tests that meet that mark. Unfortunately most of them are 720p Low (or worse).

A full list of results at various resolutions and settings can be found in our Benchmark Database.

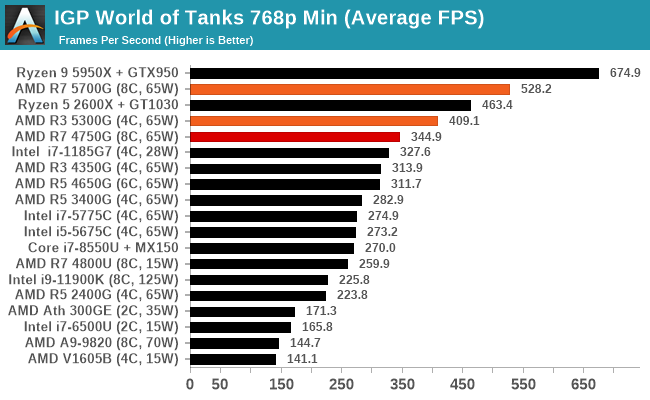

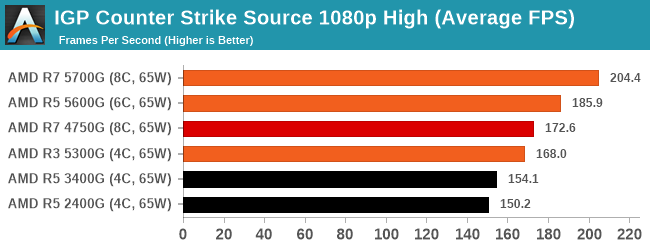

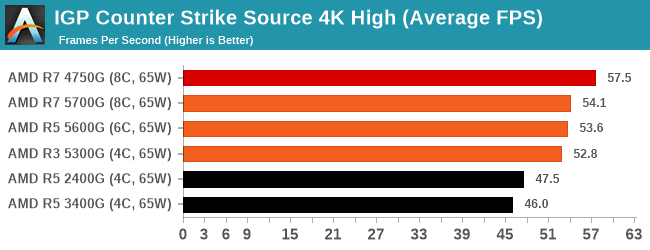

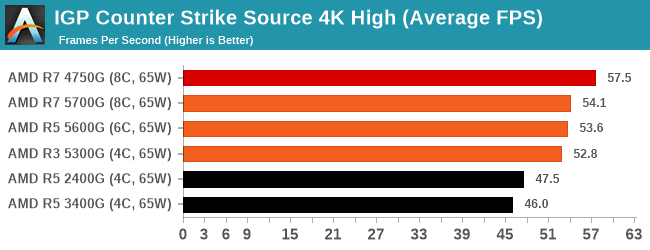

These last couple of games here, World of Tanks and CS:Source are getting on in age a bit. Playing at 1080p High/Max on both is easily done, but we cranked Source up to 4K and we're not even getting 60 frames per second. The previous generation R7 even beat out the new APUs here, probably indicating that the previous generation had more power going into the GPU and the new models are balanced towards the CPU cores a bit more. It works in some games clearly, and 1080p resolutions, but not here at 4K.

Is 1080p Max possible?

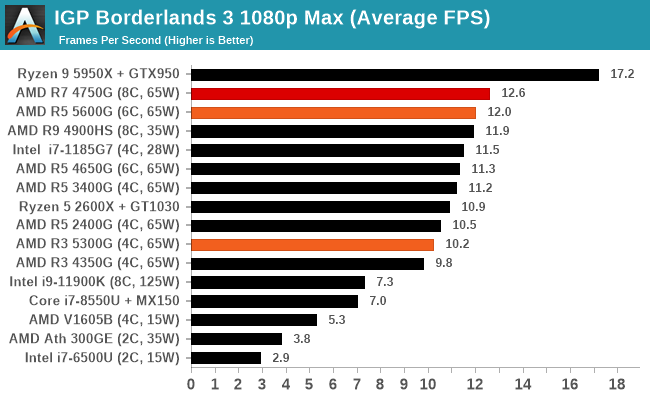

My goal for integrated graphics is for it to be suitable one day for 60 FPS gaming at 1080p maximum settings. At these settings, we're asking a lot of the graphics solution to do lots of compute, sometimes with memory bandwidth that just isn't there. A normal suite does a few of these 1080p Max tests, and the results are almost always dismal:

The short answer is no. You're lucky to break 10 or 20 FPS in most cases.

eSports are the obvious differentiation point, with CS:Source (an old favorite, don't @ me with CS:Go) showcasing almost 60 FPS at 4K High.

Note that on this 1080p High graph, it is interesting to see the frame rates increase over several generations of AMD Ryzen APUs, increasing 33% in frame rate from the first generation Ryzen 5 2400G.

The 4K variation shows the previous generation coming out ahead, and this was fairly consistent. This might be a case of where the power is going between CPU and GPU and the algorithm that determines where the workload should be.

Sometimes we get odd scenarios such as this, when the previous generation gets slightly better results. Each result has some level of uncertainty, but even at this value, they're pretty much both unplayable.

A full list of results at various resolutions and settings can be found in our Benchmark Database.

I’m a big fan of a cheap and efficient solution for a PC that performs well and just works. Sometimes when building these systems, the fewer parts the better, as it means less can go wrong, but there is also a desire to ensure the system will last and remain fast during its tenure.

Historically a processor with integrated graphics fit the bill. They used to be a dime a dozen (or up to $150), and when paired with a good small motherboard and a couple of memory modules, an SSD, and a good stock cooler, then someone like my father can browse the web and do office stuff on his 32-inch display and join the weekly family Zoom call without having to sit there for the system to respond.

What AMD has here with the new Ryzen 5000G desktop APUs is something that fast. Equipping up to eight Zen 3 cores around 4.0 GHz in a system should cater to almost* everyone’s compute needs. The new 5000G APUs are generationally a really nice improvement in raw compute performance over 4000G, but because 4000G wasn’t really at retail, we’re looking at 3000G, and the new hardware wipes the floor here. The only downside is that AMD didn’t release the cheapest offering.

Only the Ryzen 7 5700G ($359) and Ryzen 5 5600G ($259) are coming to market. Both of these processors are around about the same performance as their desktop variants (5800X and 5600X) while being cheaper, but giving up PCIe 4.0 in exchange for some integrated graphics – it probably won’t be much of a surprise to see these parts in our CPU guides going forward where we would normally recommend the X processors. The problem with these though is that the 5300G isn’t coming to market, at something like $159.

The Ryzen 3 5300G has been a fun processor to test. In every test it surpasses the previous retail APU flagship (the R5 3400G), and even if we compare it to the OEM flagship the R7 4750G, in a few tests it beats it there as well, both in regular performance and in gaming.

So why isn’t AMD selling the Ryzen 3 5300G at retail? Perhaps because it doesn’t need to. Demand for AMD’s regular processor lineup has been strong, and it is only recently that we’re seeing processors like the 5600X and 5800X come back down to MSRP and in stock regularly. AMD makes more profit on those processors (probably), so it would rather sell those. By keeping the 5300G behind, it drives users up to the mid and high-tier 5000G parts, increasing the average selling price and revenue. And in a market where all silicon seems to be being sold, it’s a clever productization tactic. The 5300G at a $159 price point would have a special place in many builds. Until that time, users will have to make do with a 5600G.

The Ryzen 7 5700G and Ryzen 5 5600G go on sale tomorrow, on August 5th 2021.

Do APUs Make Sense For Gaming Yet?

Ever since graphics hardware was first attached to CPU cores, we’ve wondered: ‘At what point can it be powerful enough to consume the entry-level market?’ Year on year, the graphics capabilities of the silicon have improved, and we get speed increases in the same way we see CPU core performance increases. However, it is not always the silicon that matters.

Games are also getting more complex. In the same way that every year we get more performance, every year the required specifications for modern games go up. Developers get ambitious and want to realize their artistic vision, and there are often two main targets for that development: playable on consoles, and the best experience on a super expensive PC. This creates a problem for the lowly integrated graphics solution, because it ends up with far less graphics power than a console.

A PS5 Processor with Integrated Graphics

Consoles have the added benefit of being a hyper-optimized and well-defined platform: the software stack is built around gaming performance and developers can cater to it. Because integrated graphics can come in many different shapes and colors, we’re relying on some of those optimizations to filter down. Not only that, but new technologies such as AMD’s FidelityFX Super Resolution are aimed at getting a better experience with less compute power. While game requirements are getting higher, at least the tricks to get better performance are also coming along.

So why not build a big integrated graphics solution for desktops, like a console? First is the market optics – realistically AMD is the main vendor in the console game but also the only vendor taking integrated graphics solutions seriously, so there’s no desire to cross-contaminate each market segment. Second is the market size – a discrete GPU, even one at 75 W, doesn’t have to compete with a joint power budget with a CPU, whereas an integrated solution does, and how many users really want a joint power budget for a main gaming system? One could easily argue that APUs make sense for those on a budget, or someone looking for a smaller system without a discrete card, and not a lot else.

On a budget, you could easily build a Ryzen 5 5600G gaming system with good recommended components for $621, providing you with almost the best integrated gaming experience while matching or beating last generation’s flagship APU in day-to-day tasks. Moving up to Zen 3 with a larger L3 cache has really unlocked more of the performance in these cores and in the graphics.

One of the big questions on the horizon is how AMD might use its 3D V-Cache technology in the future. The current implementation is a 64 MB die that sits on top of the cache in a regular CPU chiplet. That same die won’t work on an APU, but AMD could very much design one in a similar fashion for its integrated graphics, perhaps adding another 32 MB of L3 cache. The question then becomes how much extra it will cost, and whether the trade-off is worth it. At a time when discrete graphics solutions are still crazy expensive, it is perhaps not as farfetched as you might think. However, based on AMD’s disclosures, don’t expect a chip like this anytime soon.
